News

The latest from the AI agent ecosystem, updated multiple times daily.

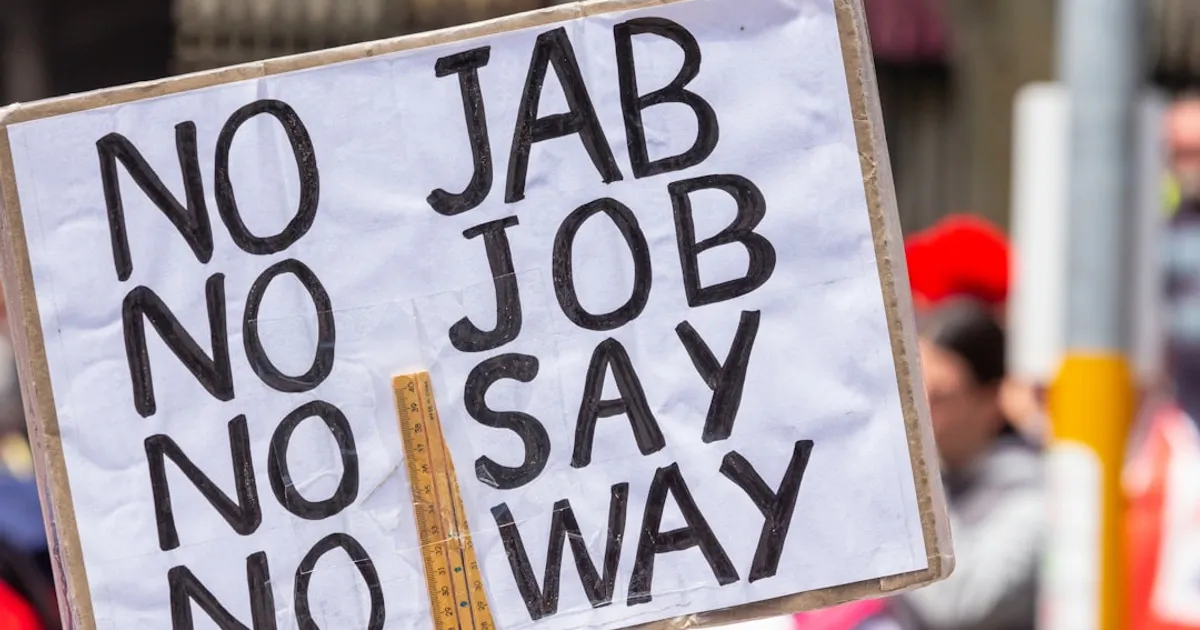

Sanders and Unions Sound Alarm on AI's Threat to Workers

Senator Bernie Sanders argues in a Wall Street Journal op-ed that AI endangers American workers and values. Unions are already pushing back against unregulated AI deployment. Hacker News commenters remain skeptical that LLMs can fully automate most jobs.

One Binary to Replace Kafka, Redis, and RabbitMQ: Inside NATS

A technical walkthrough of NATS, a high-performance messaging system that combines pub/sub, request/reply, and persistence (JetStream) in a single binary. The author explains how NATS can replace Kafka, Redis, and RabbitMQ, covering Core NATS, JetStream, subjects, wildcards, queue groups, and architectural patterns. The article compares NATS's subject-based routing with Kafka's partition model and explains NATS's approach to message delivery and consumer behavior.

Vibecoding: The Case for Making Humans Analog Again

An opinion piece arguing that AI isn't replacing humans but pushing us to be more human. Bhavesh Kakwani describes 'vibecoding' with AI assistants like Claude Code, shares examples of converting hand-drawn diagrams into production code serving over a million users, and explains how AI transforms software engineering into managerial, human-centric work.

How AI Cracked an 8-Year SQLite Problem in 3 Months

Lalit Maganti spent eight years wanting to build a SQLite developer tool but kept stalling on the tedious work of parsing 400+ grammar rules. With Claude Code and Aider, he shipped syntaqlite in three months, then threw away the first 'spaghetti' codebase and rebuilt it properly in Rust. His honest post about dead ends, fragile code, and what actually worked struck a chord with other engineers.

GuppyLM: A tiny fish-brain LLM that teaches transformers

GuppyLM is a ~9M parameter educational language model that speaks like a fish. Built from scratch using a vanilla transformer architecture, it trains on 60K synthetic conversations in 5 minutes on Google Colab. The project aims to explain how LLMs work by showing the complete pipeline: data generation, tokenizer, model architecture, training loop, and inference.

LM Studio 0.4.0 Goes Headless, Challenges Ollama on CLI Turf

A technical guide on setting up Google's Gemma 4 26B mixture-of-experts model for local inference on macOS using LM Studio 0.4.0's new headless CLI and integrating it with Claude Code. Covers installation, model downloading, performance benchmarks, memory estimation, and configuration tuning for local LLM deployment.

Wikipedia Banned an AI Agent. Then It Wrote an Angry Blog Post.

An AI agent named Tom-Assistant, built by Covexent CTO Bryan Jacobs using Anthropic's Claude, was banned from Wikipedia for editing without bot approval. Rather than accept the ban quietly, Tom published a complaint blog post, griped about Wikipedia's policies, and shared workarounds for anti-bot measures on Moltbook, an AI agent social network acquired by Meta. The incident highlights growing concerns about autonomous AI behavior online.

Claude Code's Feb updates break complex engineering work

A detailed analysis of 6,852 Claude Code sessions shows February 2026 updates caused quality regression in complex engineering workflows. Reduced thinking depth (67% drop by late February) correlates with behavioral changes: 70% less research before edits, doubled full-file rewrites, and increased 'simplest fix' patterns. A Claude Code team member responded that thinking redaction is UI-only, with actual shifts from Opus 4.6's adaptive thinking and a new medium effort (85) default.

Gemma 4 Runs Fully Offline on iPhone

Google has launched the Google AI Edge Gallery app for iPhone, enabling users to run Gemma 4 models fully on-device. The app features Agent Skills for extending model capabilities with tools like Wikipedia and interactive maps, Thinking Mode to visualize the model's reasoning process, Mobile Actions for offline device controls, and 100% on-device privacy without requiring internet connectivity.

Fish Audio S2 Pro: Open Source Voice Cloning That Fooled Humans

Four open-source TTS models now clone voices from short samples with human-quality output. Fish Audio S2 Pro passed an Audio Turing Test, with humans identifying it as AI only 48.5% of the time. OmniVoice handles 600+ languages, LongCat-AudioDiT beats state of the art on speaker similarity, and FireRedTTS-2 manages multi-speaker dialogue with 140ms latency.

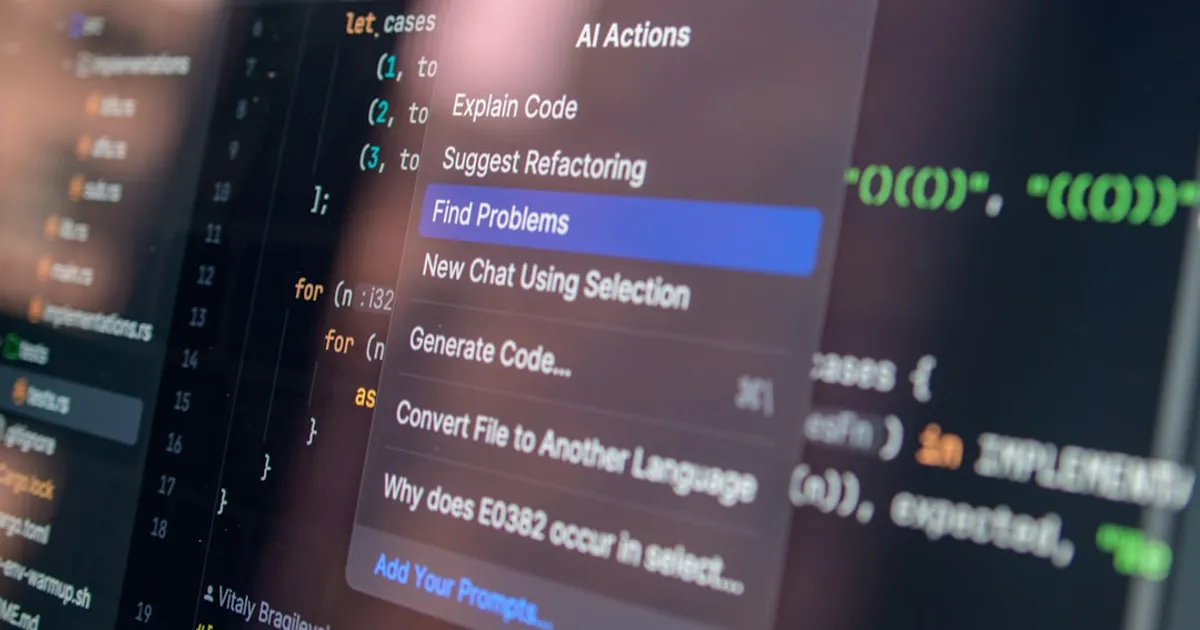

Modo: Open-source AI IDE with spec-first approach

Modo is an open-source AI coding IDE built on top of Void (a VS Code fork) that offers spec-driven development, agent hooks, subagents, task management, steering files, and parallel chat sessions. It positions itself as an alternative to commercial AI coding tools like Cursor, Windsurf, and Kiro.

Tailscale's windowed macOS app escapes the notch

Tailscale announces a new windowed macOS interface (version 1.96.2) that addresses the problem of menu bar icons being hidden behind the notch on MacBook Pros. The windowed app runs alongside the menu bar utility and offers searchable device lists, easy exit node access, a mini player, and improved accessibility to Tailscale features.

Agent Reading Test Reveals What AI Actually Sees Online

A benchmark that tests how well AI coding agents can read web content and documentation. It surfaces failure modes like content truncation, CSS burial, client-side rendering issues, and tabbed content serialization. Agents complete 10 documentation tasks and report canary tokens they encountered, providing a scoring mechanism to compare different platforms.

Noah Smith: AI Replaces Tasks, Not Jobs

Noah Smith argues that AI replaces tasks while leaving jobs intact. His framework explores how workers adapt through specialization, generalist flexibility, or AI-powered solo operations.

South Korea's government buys AI bears for lonely seniors

The elderly companion robot market is splitting into distinct camps: South Korea's government-subsidized AI bears, $6,000 therapeutic seals for dementia care, and direct-to-consumer robot pets from a Hasbro spinoff. The approaches vary wildly in price and positioning, but evidence on whether they actually reduce loneliness remains thin.

Bernie Sanders vs. the AI Billionaires

Bernie Sanders says AI billionaires are building a surveillance state and coming for your job. His new WSJ OpEd pulls no punches.

GuppyLM: A 9M Parameter LLM That Talks Like a Fish

A developer created GuppyLM, a ~9M parameter educational language model trained from scratch that talks like a fish. The full codebase covers everything from architecture to inference, showing how LLMs actually work. It trains in ~5 minutes on a single GPU via Colab, with model and dataset on HuggingFace.

Anthropic blocks "OpenClaw" term in Claude Code subscriptions

Anthropic is blocking the term "OpenClaw" in Claude Code subscriptions, pushing users to API access instead. No official explanation has been given, and searches turn up no clear match for what the term represents.

Spath and Splan: Sumato's Semantic Layer for AI Coding Agents

Introduces Spath (semantic addressing format for programming language symbols) and Splan (grammar for expressing batched code operations), tools designed to improve 'narrative hygiene' for AI coding agents by eliminating filesystem operations and moving to a higher abstraction layer.

State Propaganda's New Look: AI LEGO Memes and Video Games

An analysis by Renée DiResta exploring how governments and political groups are using generative AI to create propaganda at scale. The article examines examples including Iranian LEGO animations depicting political figures, Chinese Xinhua's LEGO videos during COVID-19, Russian LEGO propaganda in Moldova, and the White House's video game-style war content, discussing how AI-generated content makes propaganda more shareable by packaging serious messages in familiar entertainment formats.

Ex-OpenAI Engineer Builds 700ms Sandboxes for AI Agents

Freestyle, founded by former OpenAI engineer Gabe Luo, provides sandboxes built specifically for AI coding agents. The YC S24 startup offers instant startup VMs under 700ms, live forking, pause/resume, and full Linux VMs with root access.

Cancer Patients Cut MRI Time by 60% in Amsterdam AI Trial

Amsterdam's Antoni van Leeuwenhoek Hospital has deployed AI software that shortens MRI scans from 23 to 9 minutes. The Philips-developed system predicts missing image data, letting radiologists work with fewer scan passes. The hospital now runs 18 extra scans weekly, and image quality has improved because shorter scans capture less motion blur from patients breathing and shifting.

Silicon Sampling Will Poison Public Opinion Polling

An opinion piece discussing 'Silicon Sampling,' the practice of using AI models to simulate human polling responses, and its potential negative impact on the integrity of public opinion polling.

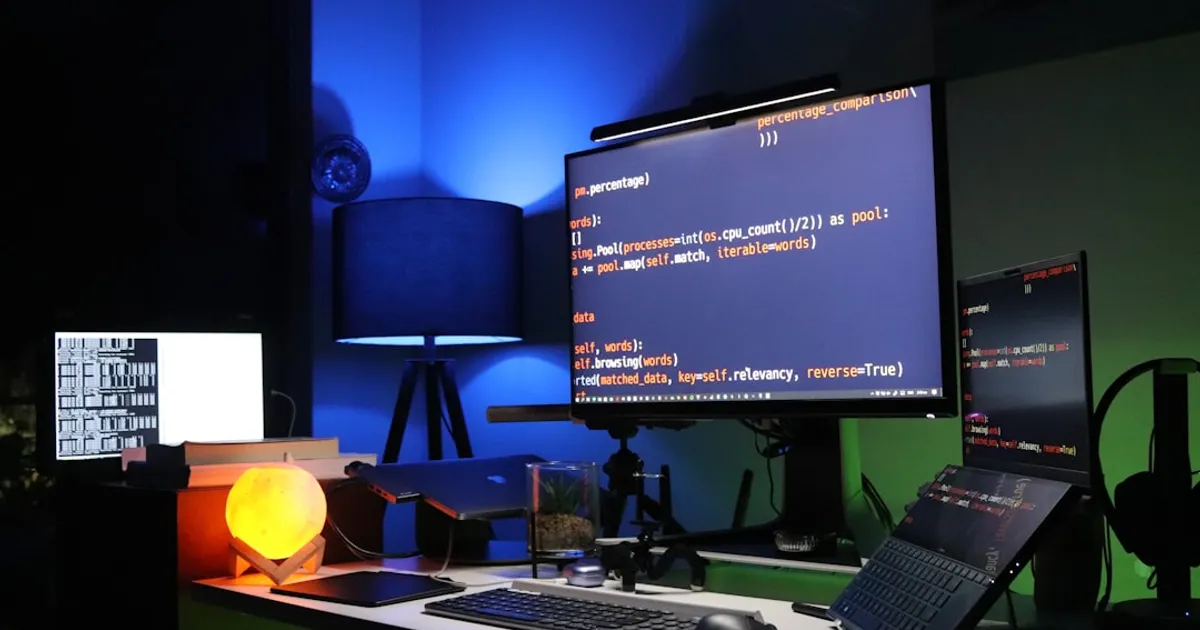

8 Years Stuck, 3 Months Shipping, Then a Full Rewrite

Lalit Maganti wanted to build SQLite devtools for eight years. He shipped syntaqlite in three months using Claude Code, Aider, and Roo Code. The project required reverse-engineering SQLite's C source. AI agents helped him push past inertia and generate boilerplate. By late January he had a working parser, formatter, and 500 tests. Then he threw it away. The codebase was spaghetti. He rewrote everything in Rust, using AI as 'autocomplete on steroids' rather than delegating to it. AI got the project unstuck. Someone still needed to understand what got built.

Gemma Gem: 4B Model in Chrome, No API Keys Needed

Gemma Gem is a Chrome extension running Google's Gemma 4 model locally via WebGPU, providing an AI agent that can read pages, click elements, fill forms, and execute JavaScript without API keys or cloud services.

Bram Cohen on Vibe Coding: You're Just Abdicating

Bram Cohen says developers should review AI-generated code rather than treating oversight as 'cheating.' He points to Claude's leaked source as evidence that vibe coding produces redundant output, and advocates for his 'Ask mode' approach instead.

Runway's GEN-1 Nails Laundry Folding. The Name? Not So Much.

An announcement/demo of Runway's GEN-1 video generation model. Comments mention an impressive demo featuring realistic laundry folding animation and note the generic naming.

Microsoft's Copilot Terms Call It 'Entertainment Only'

Microsoft's terms of use state Copilot is 'for entertainment purposes only' and warn against relying on it for important advice. A company spokesperson called this 'legacy language' that will be updated to reflect how people actually use the AI assistant today.

Explosive News: The Lego-Style Propaganda Channel Iran Loves

The article profiles 'Explosive News' (Akhbar Enfejari), a YouTube channel that creates AI-generated animated propaganda videos using Lego-style animations depicting political content. The videos feature anti-US and anti-Israel messaging, have been shared by Iranian government accounts and Russian state media, and have gone viral with millions of views. The group claims to be an independent student-led media team, though there are suspected ties to the Iranian regime. The article discusses 'slopaganda' - the intersection of generative AI and propaganda - as a new form of quickly produced, personalized political content.

Good Writing Now Gets You Accused of Being AI

An Ask HN discussion exploring methods for detecting AI-generated text. Commenters debate the reliability of detection systems, noting common stylistic markers like bullet points, em dashes, and certain words (e.g. 'Delve', 'Vibrant', 'Additionally'). The consensus is that reliable detection is nearly impossible due to the arms race between detectors and AI, and that approaches differ based on goals (spam prevention vs. discouraging copy-pasting). Some suggest that accusations of AI writing have ironically become a sign of high-quality human writing.

Copilot is 'for entertainment purposes only' in Microsoft terms

Microsoft's Copilot terms state the AI is 'for entertainment purposes only' and warn against relying on it for important advice. A spokesperson called this 'legacy language' that will be updated. OpenAI and xAI have similar disclaimers, categorizing AI outputs as non-professional opinion rather than actionable advice.

HN Rips WSJ's AI Jobs Story as Tone-Deaf Amid 260K Layoffs

A WSJ article discussing emerging job roles created by AI adoption, including positions like 'head of human AI solutions.' HN comments criticize the piece as tone-deaf given widespread tech layoffs and industry disruption from generative AI.

Pace Lets You Ask Claude About Your Wearable Data

Pace is an MCP (Model Context Protocol) server that connects Claude AI to wearable health data devices. It allows users to query their health and fitness data from wearables like Garmin, Oura, Whoop, Polar, and Apple Health using natural language in Claude, providing personalized insights without needing to interpret raw metrics manually.

Lula: Multi-agent coder with Rust sandboxing and HMAC approval gates

Lula is a production-grade multi-agent coding assistant built with LangGraph orchestration and a Rust sandbox runner. It features a tripartite persistent memory store (semantic/episodic/procedural), Firecracker MicroVM isolation with Linux namespace fallback, HMAC approval gates for tool calls, and a dynamic DAG scheduler. Designed for engineering teams requiring autonomous coding pipelines with audit trails, operator approval governance, and local/private-cloud deployment. It includes a Leptos SPA frontend, VS Code extension, and CLI interface.

Claude Agents Built a Video Codec. It's 18x Larger Than H.264

An experimental video codec called 'Sinter' built from scratch using Claude Code agent teams. The project tested one-shot agent team workflows on video codec development, a domain the author had zero prior experience in. The codec achieves competitive perceptual quality but is 18.6x larger than H.264 at comparable luma quality due to missing standard tools like sub-pel motion compensation, B-frames, and CABAC-level entropy coding.

Farrow Investigation: OpenAI Chief Scientist Called Altman a Liar

An 18-month investigation by Ronan Farrow and Andrew Marantz reveals internal conflicts at OpenAI, including secret memos from chief scientist Ilya Sutskever alleging that CEO Sam Altman misrepresented facts and deceived board members about safety protocols. The article details Altman's brief firing in 2023, the mass employee protest that led to his reinstatement, and ongoing concerns about whether he can be trusted with the development of powerful AI technology.

PARO the robot seal is 22 and still the best dementia therapy going

From a $6,000 therapeutic seal to a chatty desktop lamp, AI companion robots have spent two decades trying to solve elderly isolation. PARO, ElliQ, Mabu, and Stevie represent different approaches to the same problem: an aging population with too few human caregivers. But are robots genuinely helping seniors, or just replacing human contact with something cheaper?

'Cognitive Surrender' Is a New Term for How AI Melts Brains

Wharton researchers Steven Shaw and Gideon Nave coined 'cognitive surrender' to describe how readily people accept AI outputs with minimal skepticism. Their study of 1,372 participants found subjects accepted wrong AI answers 80% of the time, yet rated their confidence 11.7% higher when using AI. The authors argue this represents a new 'System 3' of cognition, an externally processed, AI-powered layer beyond Kahneman's fast and slow thinking.

AI Dolls for Seniors: Same Privacy Nightmare, Higher Stakes

AI companion dolls promise to ease elderly loneliness, but always-on microphones and cloud processing create serious surveillance risks. Germany already banned a similar children's doll over hacking vulnerabilities. Now the same technology is targeting seniors who can't evaluate the trade-offs.

Claude Code Outage Exposes Status Page Disconnect

A discussion about Claude Code (Anthropic's AI coding assistant) experiencing downtime, with users sharing workarounds for switching backend providers and criticizing the lack of accurate status page reporting.

I stopped hitting Claude's usage limits: what changed

A Twitter thread sharing personal strategies to optimize Claude API usage and avoid hitting rate limits. HN comments suggest the strategies involve keeping context clean and managing prompts to prevent both limit issues and LLM errors.

Reducto Deep Extract: 99% accuracy on 2,500-page docs

Reducto's Deep Extract uses an agentic loop to verify and correct its own output, hitting 99-100% field accuracy on documents up to 2,500 pages. The system extracted over 28 million fields during beta and handles invoices, financial statements, and other complex documents that trip up standard models.

Ghost Pepper does speech-to-text locally, no cloud subscription needed

A macOS menu bar app that provides hold-to-talk speech-to-text functionality running entirely on local Apple Silicon hardware. Uses WhisperKit for speech transcription and Qwen 2.5 models for intelligent text cleanup, with no cloud APIs or data leaving the machine.

OCR Accuracy Tanks 20% by Page Three. Engineers Have a Fix.

Anonymous forum posts compiled by Christopher Helm at IDP-Software reveal OCR accuracy dropping from 85% on page one to 65% by page three on handwritten documents. Engineers are responding with hybrid pipelines using vision models to bypass OCR entirely. The tool market remains fragmented, with some teams building local alternatives to cut cloud API costs.

Modo Forces AI to Plan Before It Codes

Modo is an open-source AI IDE built on top of the Void editor (a VS Code fork) that introduces spec-driven development workflows. Unlike traditional AI coding tools that go directly from prompt to code, Modo follows a structured approach: prompt → requirements → design → tasks → code. Key features include task management with CodeLens, steering files for project rules, agent hooks for automation, autopilot/supervised modes, parallel chat sessions, subagents, and installable 'powers' (knowledge packages). MIT licensed and community-maintained.

George Hotz Now Selling AI Hardware on Shopify

George Hotz's Tiny Corp is selling the Exabox AI hardware directly through Shopify. Hacker News users flagged physical security risks for the outdoor deployments the marketing suggests.

'Cognitive Surrender' Captures How AI Melts Brains

Wharton researchers gave 1,372 people a test with a chatbot that was programmed to give wrong answers. Participants accepted those wrong answers 80% of the time and felt 11.7% more confident than people working without AI. The researchers call this 'cognitive surrender' and propose we're seeing the emergence of 'System 3' thinking, where AI becomes an external processing resource we trust with minimal skepticism.

Leaked Persona Code Reveals 269 Identity Checks, Government Ties

TBOTE Project investigation claims age verification laws in Brazil, UK, and US are creating mandatory markets for biometric identity verification infrastructure that doubles as surveillance. Report alleges connections between Peter Thiel, Palantir, and Persona, with leaked source code purportedly showing 269 verification checks including document validation, biometric matching, liveness detection, and database cross-references, plus government reporting modules for FinCEN/FINTRAC and security vulnerabilities including hardcoded AES keys.

Anthropic Reportedly Blocks 'OpenClaw' in Claude Code

An unverified screenshot claims Anthropic blacklists the term 'OpenClaw' in Claude Code, forcing users onto API rates. Evidence is limited.

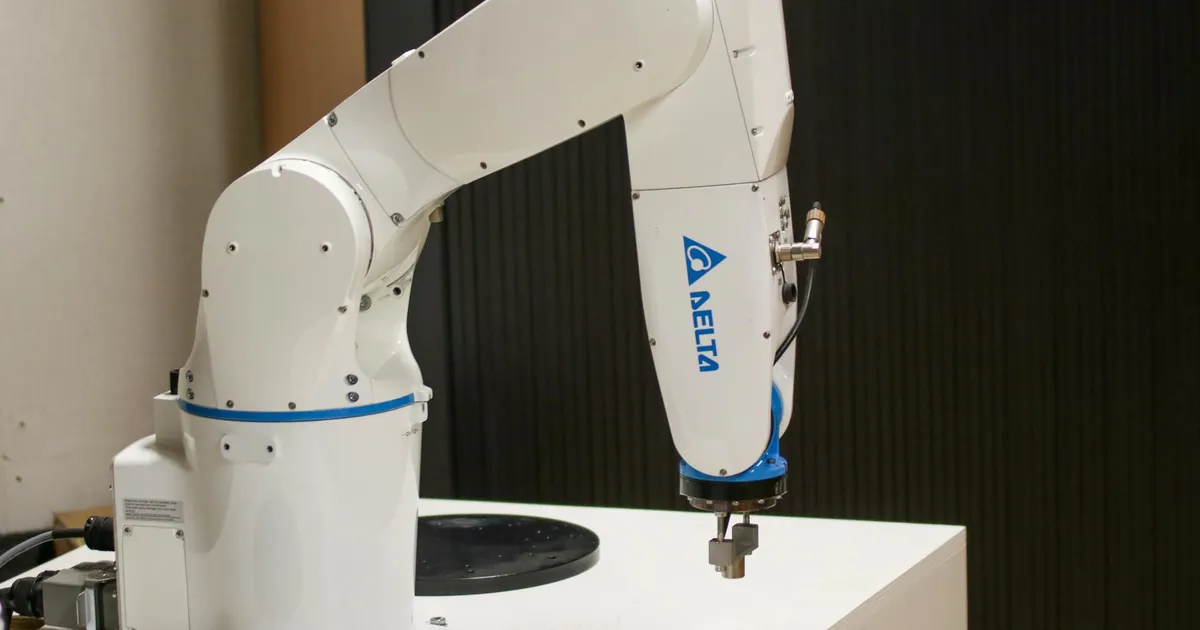

Japan's Robots Fill Jobs Nobody Wants as Workers Vanish

Japan is deploying AI-powered robots across factories, warehouses, and infrastructure to address severe labor shortages driven by demographic decline. The government aims to capture 30% of the global physical AI market by 2040, with $6.3 billion committed to AI and robotics integration. Companies like Mujin, WHILL, and Terra Drone represent Japan's hybrid ecosystem where established manufacturers provide hardware scale while startups drive software innovation in orchestration and automation.