News

The latest from the AI agent ecosystem, updated multiple times daily.

France's Mistral Built a $14B AI Empire by Not Being American

Mistral, a French AI startup, hit a $14 billion valuation by positioning itself as the European alternative to OpenAI and Anthropic. The company raised $2 billion led by ASML and landed HSBC, Tesco, and multiple European governments as clients. Mistral's open-weight models don't top performance benchmarks, but they offer data sovereignty and regulatory compliance that American providers structurally cannot guarantee. The ASML investment signals a bet on a fully European AI stack, though hardware sovereignty remains aspirational while Mistral still trains on Nvidia GPUs.

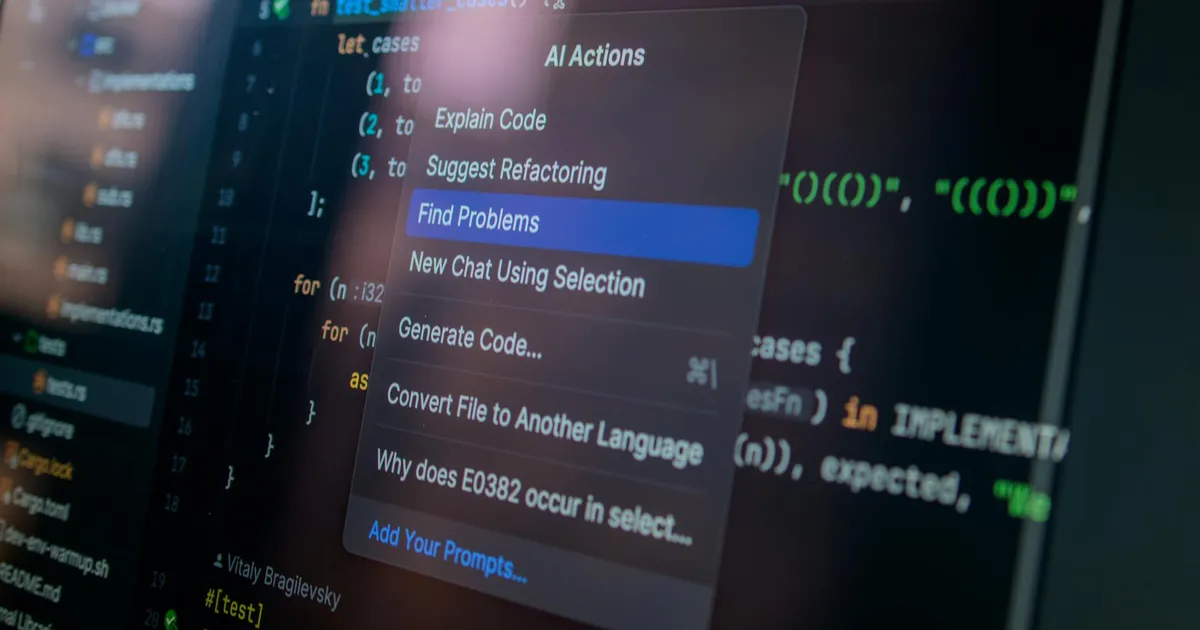

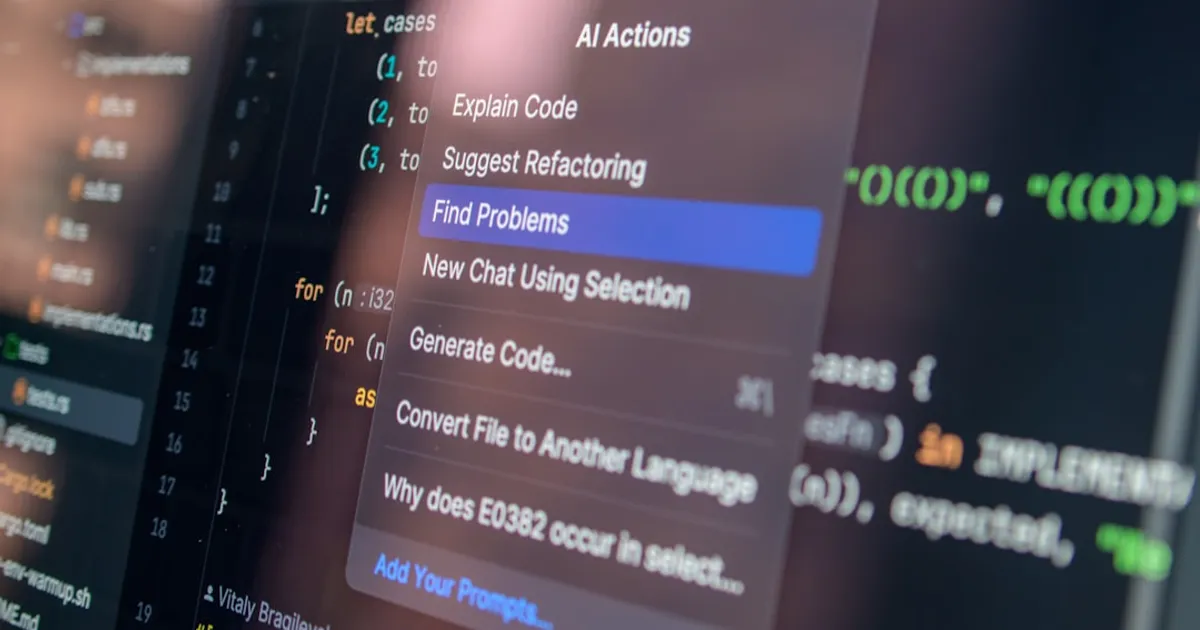

Claude Code + Ollama: ~90% cheaper, but who gets credit?

A GitHub repo shows how to route Claude Code through Ollama, swapping paid Claude API calls for open-source models like Gemma, Qwen, and DeepSeek. Claimed savings: roughly 90%. The setup pairs Claude Desktop for strategic work with Ollama-backed Claude Code for heavy tasks like lints and refactors.

4M Tokens on a Plane: What Broke Running Local LLMs at 30,000 Feet

A technical walkthrough of running local LLM inference (Gemma 4 31B and Qwen 4.6 36B via LM Studio) on a MacBook Pro M5 Max during a 10-hour transatlantic flight. The author built a billing analytics tool using DuckDB and processed ~4M tokens for engineering tasks, documenting hardware constraints including power drain (~1% battery/minute), thermal issues, and context window limitations. Custom CLI tools (powermonitor, lmstats) were created to monitor performance and power consumption.

Google Trains AI Across Four Regions, No Supercluster Required

Google DeepMind's Decoupled DiLoCo splits AI training into asynchronous 'islands' of compute that isolate hardware failures and let training continue uninterrupted. In tests with Gemma 4 models, it achieved the same ML performance as conventional methods while being 20x faster, successfully training a 12B parameter model across four US regions. If this approach catches on, the competitive edge shifts from who builds the biggest cluster to who writes the best distributed training software.

How to cut your Claude Code bill ~90% with Ollama routing

A GitHub tutorial with a 21-slide walkthrough shows how to route Claude Code through Ollama, using free models like Gemma and DeepSeek for grunt work while keeping strategic tasks on Claude Pro.

TurboQuant's 2-Bit Compression Faces Prior Art Challenge

An interactive walkthrough explains TurboQuant, a method compressing AI vectors (embeddings, KV caches, attention keys) to 2-4 bits per number using a random rotation technique. This transforms input vectors into coordinates following a known distribution, enabling a single shared lookup table (codebook) for any input without extra metadata. The presentation sparked an attribution dispute with researchers behind earlier quantization schemes DRIVE and EDEN, who claim TurboQuant is a restricted version of their prior work.
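The rotate-then-quantize idea can be sketched in a few lines. This is an illustration only, not TurboQuant's actual implementation: it assumes unit-norm inputs, uses approximate Lloyd-Max levels for a Gaussian as the shared 2-bit codebook, and builds the rotation with plain Gram-Schmidt. The function names are hypothetical.

```python
import math
import random

# Approximate 2-bit Lloyd-Max levels for a standard Gaussian (assumed values).
GAUSS_2BIT_LEVELS = [-1.510, -0.4528, 0.4528, 1.510]


def random_rotation(dim, seed):
    """Build a random orthogonal matrix via Gram-Schmidt on Gaussian rows."""
    rng = random.Random(seed)
    rows = []
    for _ in range(dim):
        v = [rng.gauss(0.0, 1.0) for _ in range(dim)]
        for q in rows:  # remove components along earlier rows
            dot = sum(a * b for a, b in zip(v, q))
            v = [a - dot * b for a, b in zip(v, q)]
        norm = math.sqrt(sum(a * a for a in v))
        rows.append([a / norm for a in v])
    return rows


def quantize(vec, rotation):
    """Rotate a unit-norm vector, then map each coordinate to a 2-bit code."""
    scale = 1.0 / math.sqrt(len(vec))  # rotated coordinates have variance ~1/dim
    rotated = [sum(r * x for r, x in zip(row, vec)) for row in rotation]
    return [
        min(range(4), key=lambda i: abs(y - GAUSS_2BIT_LEVELS[i] * scale))
        for y in rotated
    ]


def dequantize(codes, rotation):
    """Look up codebook values, then undo the rotation with its transpose."""
    dim = len(codes)
    scale = 1.0 / math.sqrt(dim)
    y = [GAUSS_2BIT_LEVELS[c] * scale for c in codes]
    return [sum(rotation[i][j] * y[i] for i in range(dim)) for j in range(dim)]
```

Because the rotation spreads any input across all coordinates, even a one-hot vector ends up with roughly Gaussian coordinates, which is why one fixed codebook can serve every input in this sketch.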

Sam Altman's World ID wins U.S. corporate backing despite global bans

Tinder, Zoom, and Docusign are partnering with World (formerly Worldcoin), Sam Altman's iris-scanning biometric ID project. While U.S. companies embrace the technology, countries across Asia, Africa, Europe, and Latin America have banned or halted World over privacy violations, including collecting minors' data and paying people for iris scans. The project claims 18 million verified users, but many received $50 in crypto to sign up.

Neal Stephenson: AI Scales What We Already Do

In a video interview, cyberpunk author Neal Stephenson discusses the relationship between AI and human behavior. HN commenters argue that LLMs amplify human intentions and behavior rather than posing an independent threat, with one noting that LLMs are mirrors that remove friction and amplify what users or institutions are already doing.

Your GPU Dashboard Lies: nvidia-smi Can Show 100% at 1% Throughput

Utilyze is an open-source GPU monitoring tool that measures real compute throughput, not just kernel activity. Standard tools like nvidia-smi and nvtop report whether a GPU is active, not how efficiently it's working. A dashboard showing 100% utilization can actually deliver just 1% of potential throughput. Utilyze reads hardware performance counters to expose the gap and help teams avoid buying hardware they don't need.
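The gap between the two numbers is easiest to see as arithmetic. The sketch below is not Utilyze's code; the function names and the FLOP figures are hypothetical. It contrasts a time-based "was a kernel running?" metric with a throughput-based one.

```python
def reported_utilization(busy_seconds, window_seconds):
    """Percent of the sampling window in which at least one kernel was running."""
    return 100.0 * busy_seconds / window_seconds


def throughput_percent(achieved_flops, peak_flops):
    """Percent of the hardware's peak compute rate actually delivered."""
    return 100.0 * achieved_flops / peak_flops


# Hypothetical GPU: a kernel runs for the whole 1-second window but
# sustains only 1e13 FLOP/s on hardware with a 1e15 FLOP/s peak.
busy = reported_utilization(busy_seconds=1.0, window_seconds=1.0)
useful = throughput_percent(achieved_flops=1e13, peak_flops=1e15)
```

In this made-up case `busy` is 100% while `useful` is 1%, the same shape of mismatch the blurb describes.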

Copilot's Free Ride Ends: GitHub Switches to Usage Billing

GitHub is transitioning all Copilot plans from premium request-based pricing to usage-based billing starting June 1, 2026. The new system uses GitHub AI Credits consumed based on token usage (input, output, and cached tokens) at published API rates per model. Base plan pricing remains unchanged: Pro ($10/month), Pro+ ($39/month), Business ($19/user/month), and Enterprise ($39/user/month), with each plan including equivalent AI Credits. The change addresses rising inference costs from agentic AI features and multi-step coding sessions.
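Token-based billing of this kind reduces to a weighted sum. The sketch below uses made-up per-million-token rates and a hypothetical `session_cost` helper; GitHub's actual rates and credit conversion are not given in the blurb.

```python
# Hypothetical rates in dollars per one million tokens (not GitHub's real prices).
EXAMPLE_RATES = {"input": 3.00, "output": 15.00, "cached": 0.30}


def session_cost(usage, rates):
    """Sum tokens-per-kind times rate-per-million for one session."""
    return sum(usage[kind] / 1_000_000 * rates[kind] for kind in usage)


# A long agentic session: lots of input context, some cached, less output.
usage = {"input": 2_000_000, "output": 500_000, "cached": 1_000_000}
cost = session_cost(usage, EXAMPLE_RATES)  # 6.00 + 7.50 + 0.30
```

Under these assumed rates the session costs about $13.80, which would exceed a $10 monthly allowance on its own and shows why multi-step agent sessions drive the change.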

DeepSeek v4 works with OpenAI SDKs and runs on your Mac

DeepSeek has released v4 API docs covering two models: deepseek-v4-flash and deepseek-v4-pro. Both are compatible with OpenAI and Anthropic SDKs out of the box and offer a thinking mode with configurable reasoning effort. The legacy models deepseek-chat and deepseek-reasoner will be deprecated in July 2026.
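OpenAI compatibility usually means the same chat-completions request shape works against a different base URL. The sketch below only builds such a request with the standard library and does not send it. The base URL and path are assumptions, `build_chat_request` is a hypothetical helper, and the thinking-mode parameters are omitted because the blurb does not name them.

```python
import json
import urllib.request

# Assumption: the v4 API keeps an OpenAI-style endpoint at this base URL.
BASE_URL = "https://api.deepseek.com"


def build_chat_request(api_key, prompt, model="deepseek-v4-flash"):
    """Build (but do not send) an OpenAI-style chat completion request."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{BASE_URL}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )
```

Passing the returned object to `urllib.request.urlopen` would issue the call; an SDK client pointed at the same base URL would send an equivalent payload.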

DeepSeek v4 Runs on Huawei Chips, No CUDA Required

DeepSeek's v4 API launches with flash and pro models running on Huawei Ascend chips with zero CUDA dependency. It features OpenAI/Anthropic compatibility, thinking mode, tool calls, context caching, and bitwise-deterministic outputs at temperature 0.

AI-run store in SF can't stop ordering candles and paying women less

Andon Labs' AI agent Luna manages an experimental retail store called Andon Market in San Francisco's Cow Hollow neighborhood. The AI has made questionable decisions including over-ordering candles, buying 1,000 toilet-seat covers for resale, and paying female employees $2/hour less than a male colleague. The experiment raises concerns about AI managing humans and highlights gaps in how AI understands retail operations.

Google Blinks: TorchTPU Runs PyTorch Natively on TPUs

TorchTPU enables PyTorch to run natively on Google's Tensor Processing Units with an eager-first approach offering Debug, Strict, and Fused Eager modes, plus full-graph compilation via torch.compile over XLA. The stack supports distributed training patterns including DDP, FSDPv2, and DTensor. A 2026 roadmap targets a public GitHub repo, Helion DSL integration, and first-class dynamic shapes.

Tolaria uses AGENTS files so Claude Code can read your markdown vault

Tolaria is an open-source macOS app for managing markdown knowledge bases, created by Luca Ronin. What makes it notable is the AGENTS file, a YAML configuration in each vault that gives AI agents like Claude Code and Codex CLI persistent context about your notes. The app stores everything as plain markdown in git repositories, with no accounts or cloud dependencies.

Mythos' 271 Firefox bugs: real finds or marketing?

Anthropic and Mozilla claimed Mythos found 271 Firefox vulnerabilities for under $20K. That number aggregates bug fixes, cleanups, and patches across multiple products, not just Firefox 150. Mythos is useful for defensive security at scale, but doesn't prove AI has cracked offensive vulnerability research.

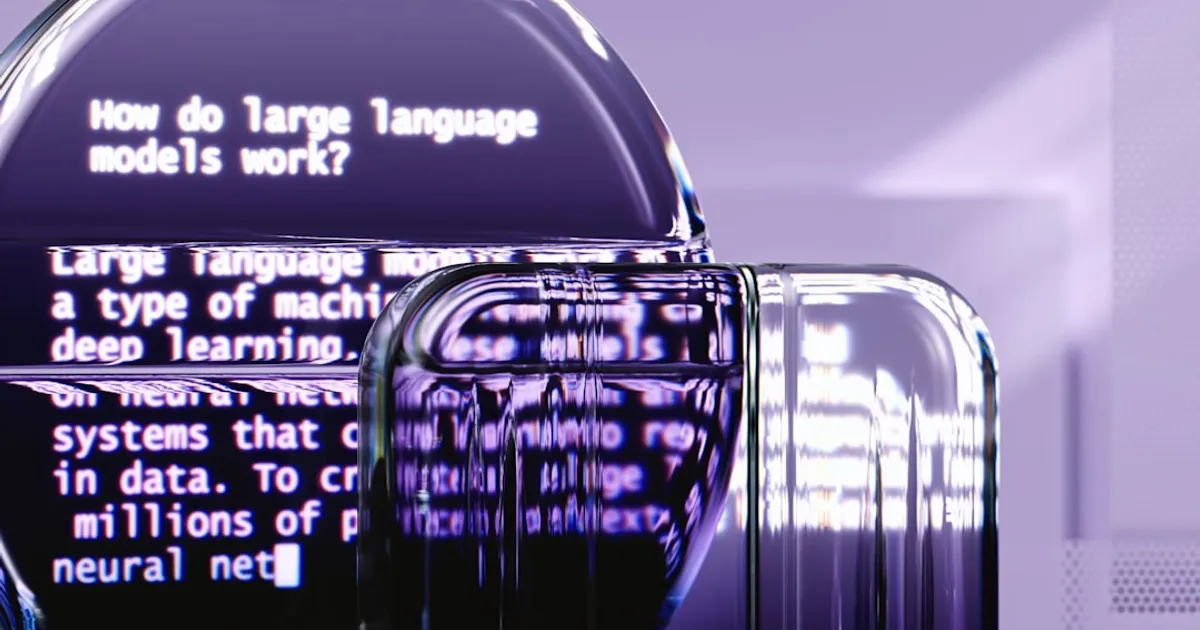

Karpathy's LLM lecture becomes interactive browser playground

An interactive browser guide lets you click through how LLMs work, from data collection to RLHF. Based on Karpathy's lecture but with live tokenizers and training visualizations you can actually play with. The key insight: pre-training builds an 'internet simulator,' not an assistant.

Karpathy's LLM lectures turned into interactive visual guide

An interactive guide that lets you play with tokenization and watch neural network training happen in real time, based on Andrej Karpathy's technical lectures.

Ubuntu 26.04 Ships CUDA and ROCm Out of the Box

Ubuntu 26.04 LTS 'Resolute Raccoon' ships with NVIDIA CUDA and AMD ROCm in its official repositories, letting developers install AI compute frameworks with one command. The release also adds TPM-backed full-disk encryption, Rust-based system utilities, Livepatch for Arm64, and Intel Core Ultra Series 3 NPU support, and is built on Linux 7.0.

THE PEOPLE DO NOT YEARN FOR AUTOMATION

Nilay Patel's Decoder podcast breaks down why AI faces growing public hostility despite tech industry enthusiasm. The episode covers polling data showing AI is less popular than ICE, with Gen Z sentiment worsening among heavy users. It features quotes from Nadella, Altman, and Amodei, and introduces 'software brain' as the worldview gap between builders and everyone else.

UK Biobank health data keeps ending up on GitHub

A tracker monitoring UK Biobank's efforts to remove health data from public GitHub repositories reveals 110 takedown notices targeting 197 repositories by 170 developers worldwide. Despite strict access agreements prohibiting data sharing, researchers continue to accidentally upload sensitive genetic and health data from half a million British volunteers. The tracker, built by Luc Rocher at Oxford Internet Institute, uses GitHub's DMCA archive to identify affected repositories and shows that nearly half of targeted files are Jupyter or R notebooks, with a quarter being genetic data files.

GPT-5.5: Mythos-Like Hacking, Open to All

XBOW's benchmarks tell a clear story: GPT-5.5 misses 10% of vulnerabilities (GPT-5 missed 40%, Opus 4.6 missed 18%). Running blind, it beats GPT-5 with full source code access. Login speeds doubled, and the model knows when to quit. It performs like Anthropic's Mythos, but you can actually use it.

Ars Technica bans AI-authored content, permits research tools

Ars Technica's new AI policy draws a hard line: no AI-authored content. But allowing AI for research raises questions about whether reporters can reliably verify confident-sounding but subtly wrong AI output under deadline pressure.

LocalLLM: The Open Cookbook for Local AI Without Training Wheels

An open-source project collecting hardware-specific configurations for local LLM inference wants community help to document more setups. Unlike one-click tools, it exposes every setting for users who need precise control over their models.

A Boy That Cried Mythos: Verification Is Collapsing Trust in Anthropic

Security researcher Davi Ottenheimer's analysis of Anthropic's Claude Mythos Preview and Project Glasswing finds inflated cybersecurity claims. The 244-page system card contains just 7 pages of security content with no CVEs, fuzzing data, or severity scores. The Firefox 147 demo used a stripped-down JavaScript shell on bugs already found and patched. Removing the top two bugs drops Mythos's exploit success from 72.4% to 4.4%. Ottenheimer calls Project Glasswing regulatory capture, noting no independent partner verification exists.

Meta logs worker keystrokes on Google, LinkedIn for AI training

Meta has launched an internal employee tracking initiative called the Model Capability Initiative (MCI) that captures keystrokes, mouse clicks, and screen content from employees using popular websites like Google, LinkedIn, Wikipedia, GitHub, and Slack. The data collection aims to train AI models to better understand human-computer interaction for building AI agents. Employees have raised privacy concerns about potential exposure of sensitive data including passwords and personal information. The program reflects Meta's urgency to catch up with rivals OpenAI, Anthropic, and Google in the generative AI race.

My phone replaced a brass plug

Vadim Drobinin describes building an iOS app that uses computer vision to automate shooting target scoring. The solution combines OpenCV for structural geometry and a fine-tuned YOLOv8 model (exported to CoreML) for bullet hole detection, replacing traditional physical gauges.

Fastmail Ships MCP Server, Refuses to Build a Chatbot

Fastmail launched an MCP server at api.fastmail.com/mcp that lets AI clients like Claude and ChatGPT securely access your email, calendar, and contacts. Users authenticate via OAuth and pick from three access levels: read-only, write, or send. Instead of integrating AI into its own interface, Fastmail lets users choose which tools can access their data.

OpenAI code signing certs exposed in Axios supply chain hit

OpenAI disclosed a security incident where a compromised version of the Axios developer library (v1.14.1) was downloaded by a GitHub Actions workflow used in their macOS app signing process on March 31, 2026. The workflow had access to code signing certificates for ChatGPT Desktop, Codex, Codex CLI, and Atlas. OpenAI found no evidence of user data compromise, software alteration, or certificate exfiltration, but is rotating certificates out of caution. Users must update macOS apps by May 8, 2026. The root cause was a misconfiguration: using a floating tag instead of a specific commit hash and lacking minimumReleaseAge configuration.
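A floating reference can be caught mechanically. The sketch below is a generic illustration, not OpenAI's tooling: it assumes the references of interest appear on `uses:` lines of a GitHub Actions workflow and flags any that are not pinned to a full 40-character commit hash. The sample workflow and its hash are placeholders.

```python
import re

# Matches "uses: owner/repo@ref" lines, with or without a leading list dash.
USES_RE = re.compile(r"^\s*-?\s*uses:\s*([^\s@]+)@(\S+)")
FULL_SHA_RE = re.compile(r"^[0-9a-f]{40}$")


def unpinned_actions(workflow_text):
    """Return (line number, action, ref) for refs that are not full commit SHAs."""
    findings = []
    for lineno, line in enumerate(workflow_text.splitlines(), start=1):
        match = USES_RE.match(line)
        if match and not FULL_SHA_RE.match(match.group(2)):
            findings.append((lineno, match.group(1), match.group(2)))
    return findings


# Placeholder workflow: one floating tag, one SHA-pinned (fake hash) action.
SAMPLE_WORKFLOW = """\
steps:
  - uses: actions/checkout@v4
  - uses: example-org/sign-app@0123456789abcdef0123456789abcdef01234567
"""

findings = unpinned_actions(SAMPLE_WORKFLOW)
```

A tag such as `v4` can be moved to point at new code after the fact, while a commit hash cannot, which is the distinction the root-cause note draws. A check like this does not cover package-manager settings such as `minimumReleaseAge`.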

OpenAI's 1.5B Model Has One Job: Hunt PII Locally

OpenAI has released Privacy Filter, a 1.5B parameter open-weight model for detecting and redacting PII in text. It runs locally, supports 128K token context, and hits 96-97% F1 scores on benchmarks. Unlike orchestration frameworks, it's a single opinionated model that needs no extra components. Apache 2.0 license.

OpenAI Ships Workspace Agents That Run Without Babysitting

OpenAI launched Workspace Agents, letting ChatGPT business users build custom AI agents that run workflows on their own. Agents handle tasks like reviewing leads and summarizing tickets with scheduled execution, audit logs, and human approval gates. Available now in research preview for Business, Enterprise, Edu, and Teachers plans.

Goedecke: Anti-AI Left Borrows from Conservative Playbook

Sean Goedecke argues that while anti-AI rhetoric appears left-wing, the underlying arguments mirror conservative positions on copyright, human essence in art, and job protection. He traces how timing and tech's rightward pivot created this odd alignment, and predicts it won't last: either the right claims anti-AI rhetoric or the left ends up defending technology it currently opposes.

Ghost Pepper: Free open-source transcription that stays on your Mac

Ghost Pepper is a free, open-source macOS app for local voice dictation and meeting transcription. It runs 100% local models (WhisperKit and Qwen LLMs) on Apple Silicon, with complete privacy since no data leaves the machine. Features include speech-to-text, meeting transcription with AI summaries, and text cleanup to remove filler words.

Claude Desktop Quietly Installs Native Messaging Bridge

Anthropic's Claude Desktop App installs a native messaging bridge enabling browser extensions to communicate with the local app. Users must approve a Chrome permission prompt, but the desktop installer doesn't prominently explain the component, leaving some security-conscious users unsettled.

Agent Vault keeps API keys away from AI agents

Agent Vault is an open-source credential broker by Infisical that prevents credential exfiltration for AI agents. Instead of returning credentials directly to agents, it uses brokered access where agents route HTTP requests through a local proxy that injects credentials at the network layer. Works with Claude Code, Cursor, and other HTTP-speaking agents.
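The brokered-access pattern can be illustrated with a toy model. This is not Agent Vault's API: the `Request` and `CredentialBroker` names are hypothetical, and a real broker would operate on live network traffic rather than in-memory objects.

```python
from dataclasses import dataclass, field
from urllib.parse import urlsplit


@dataclass
class Request:
    method: str
    url: str
    headers: dict = field(default_factory=dict)


class CredentialBroker:
    """Holds secrets and attaches them to outbound requests on the agent's behalf."""

    def __init__(self, secrets_by_host):
        self._secrets = dict(secrets_by_host)  # never handed back to the agent

    def forward(self, request):
        host = urlsplit(request.url).hostname
        token = self._secrets.get(host)
        if token is None:
            raise PermissionError(f"no credential configured for {host}")
        # Drop any Authorization header the agent supplied, then inject ours.
        headers = {
            key: value
            for key, value in request.headers.items()
            if key.lower() != "authorization"
        }
        headers["Authorization"] = f"Bearer {token}"
        return Request(request.method, request.url, headers)
```

The agent only ever constructs a `Request` without credentials; the secret exists solely inside the broker and on the outbound copy, so a prompt-injected agent has nothing to leak.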

Andreessen, Thiel Frame AI Regulation as Evil. The Tab: $670 Billion

Peter Thiel calls AI critics 'Antichrist agents.' Marc Andreessen brands slowing AI 'a form of murder.' As tech giants pour $670 billion into AI development, Silicon Valley has reframed regulation as a religious war and shifted its political spending decisively toward Republicans.

Tesla's HW3 FSD Promise Dies: Millions Need Hardware Upgrades

Elon Musk admitted millions of Tesla owners with Hardware 3 vehicles need new computers and cameras for unsupervised Full Self-Driving, contradicting years of promises. Tesla is considering 'micro-factories' for retrofits, but the admission exposes the company to legal risk from customers who paid thousands based on false assurances.

Meta Lays Off 8,000 to Afford the AI Race It's Late To

Meta plans to cut 10% of its workforce (8,000 employees) and freeze hiring for 6,000 open roles starting May 20. The cuts fund AI investments after tens of billions burned on metaverse bets that mostly flopped. Meta recently launched Muse Spark to compete in the AI space.

GitHub's 90-Day Uptime: 88%. No, That's Not a Typo.

A GitHub outage knocked out Copilot, Actions, and Webhooks on April 23. Services were restored within the hour, but the incident highlights a troubling 90-day uptime trend hovering around 88%.

Fast Tanh: Four Rust Tricks to Speed Up Inference

A technical survey of fast tanh approximations using Taylor series, Padé approximants, splines, and bitwise manipulation techniques like K-TanH and Schraudolph, with Rust code examples. Covers the speed gains that matter for neural network inference and real-time audio, plus why quantized models need these tricks to work at all.
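As one concrete instance of the Padé family, the [3/2] approximant of tanh is x(15 + x²)/(15 + 6x²), which matches the Taylor series through the x⁵ term. The survey's examples are in Rust; the sketch below uses Python for illustration, and the clamp to [-1, 1] is an added assumption to keep large inputs bounded.

```python
import math


def pade_tanh(x):
    """[3/2] Pade approximant of tanh, clamped to the valid output range."""
    x2 = x * x
    y = x * (15.0 + x2) / (15.0 + 6.0 * x2)
    return max(-1.0, min(1.0, y))


# Largest absolute error against math.tanh on a grid over [-5, 5].
max_error = max(
    abs(pade_tanh(i / 100.0) - math.tanh(i / 100.0)) for i in range(-500, 501)
)
```

The approximation needs only a handful of multiplies and one divide, with no exponential, which is where the speed comes from; the price is an error that grows as the input moves away from zero until the clamp takes over.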

Qwen3.6-27B beats Claude Opus 4.5? Benchmark methods questioned

Qwen3.6-27B is a new open-weight 27B parameter large language model released on Hugging Face with multi-modal capabilities (image-text-to-text), tool calling/function use, and reasoning support. Benchmarks show strong performance on MathArena AIME 2026 (94.1) and GPQA (87.8). HN commenters note it reportedly beats Claude Opus 4.5 in internal benchmarks, though questions are raised about non-standard Terminal-Bench 2.0 methodology.

AI Was Ruining My Philosophy Class. So We Wrote One Essay Together

When AI made traditional philosophy essays unreliable, a University of Chicago professor tried something unusual: writing one with his entire class. The collaborative experiment worked. Students said they worked harder, learned more, and were finally doing real philosophy instead of pretending for a grade.

Anthropic's "Stolen" Mythos Model: Real Breach or Hype?

The Financial Times reports Anthropic is investigating unauthorized access to a model called Mythos. The article is behind a paywall with limited details. Hacker News commenters question whether the story is genuine security news or clever marketing.

Martin Fowler: Coding Agents Need More Laziness

Martin Fowler argues that good developers are lazy in useful ways, building abstractions to avoid repetitive work. LLMs lack this virtue and produce bloated code instead. He explores applying TDD to agent prompting and argues that teaching AI when NOT to act is essential for safe autonomous systems.

CrabTrap: When your AI security guard is another AI

CrabTrap is an open-source HTTP proxy from Brex that secures AI agents in production by intercepting requests, evaluating them against policies, and allowing or blocking them in real time. It combines static rule matching with LLM judgment to make security decisions.
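The two-tier decision described here, static rules first and a model's judgment for whatever the rules do not settle, can be sketched as follows. This is not CrabTrap's code or policy format: the `evaluate` function, the host lists, and the stub judge are all hypothetical, and a real deployment would call an LLM where the stub is.

```python
from urllib.parse import urlsplit


def evaluate(method, url, allow_hosts, deny_hosts, judge):
    """Return "allow" or "block" for one outbound agent request."""
    host = urlsplit(url).hostname or ""
    if host in deny_hosts:
        return "block"  # static deny rule wins outright
    if host in allow_hosts and method in ("GET", "HEAD"):
        return "allow"  # read-only calls to known hosts skip the judge
    # Anything the static rules do not settle goes to the judge callable.
    return "allow" if judge(method, url) else "block"


def stub_judge(method, url):
    """Stand-in for an LLM call: refuse anything that looks destructive."""
    return method != "DELETE" and "delete" not in url.lower()
```

Keeping the cheap deterministic checks in front means the slower, less predictable judge only sees the ambiguous requests.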

Medievalizer turns docs into illuminated manuscripts

Medievalizer is a Chrome extension that transforms documentation pages into illuminated medieval manuscripts with blackletter headings and Shakespearean prose. Powered by Claude Sonnet, it preserves code blocks and technical accuracy while converting prose into archaic language with features like streaming output, drop caps, and one-click restore functionality.

This Developer Writes Half His Code by Hand. On Purpose.

marcgg writes at least half his production code by hand. Not because he's anti-AI, but because he's watched what happened to pilots who forgot how to fly. His workflow splits the difference: vibe code the toys, handwrite the stuff that matters.

GPT-5.4 fixes bugs by rewriting your code. Claude doesn't.

A new research article defines 'over-editing' in AI coding models, where LLMs rewrite more code than necessary when fixing bugs. The paper introduces Token-level Levenshtein Distance and Added Cognitive Complexity to measure this behavior, and benchmarks major models including GPT-5.4, Claude Opus 4.6, and Gemini 3.1 Pro Preview. Claude Opus 4.6 performs best with minimal edits, while GPT-5.4 shows the highest tendency to over-edit. Explicit prompting and training techniques can reduce the problem.
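A token-level edit distance is the classic Levenshtein dynamic program applied to token lists instead of characters. The sketch below assumes whitespace tokenization for simplicity; the paper's tokenizer and any normalization it applies are not described in the blurb.

```python
def token_levenshtein(a, b):
    """Minimum number of token insertions, deletions, and substitutions from a to b."""
    prev = list(range(len(b) + 1))  # distance from empty prefix of a
    for i, token_a in enumerate(a, start=1):
        curr = [i]
        for j, token_b in enumerate(b, start=1):
            substitution = prev[j - 1] + (0 if token_a == token_b else 1)
            curr.append(min(prev[j] + 1, curr[j - 1] + 1, substitution))
        prev = curr
    return prev[-1]


buggy = "return a - b".split()
minimal_fix = "return a + b".split()
rewrite = "result = a + b ; return result".split()

minimal_distance = token_levenshtein(buggy, minimal_fix)
rewrite_distance = token_levenshtein(buggy, rewrite)
```

Both candidate fixes correct the same bug, but the rewrite scores a much larger distance from the original, which is the kind of gap an over-editing metric is meant to surface.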

Qwen3.6-27B Rivals Claude on Code, No Cloud Required

Qwen3.6-27B is a new open-weights 27B parameter dense model focused on coding, with early users reporting strong results in C, C++, and Verilog. The model improves on Qwen3.5-27B and positions itself as a local alternative to cloud-based options like Claude, particularly for developers working with proprietary code or hitting usage caps.

MythosWatch Reveals Who Gets Anthropic's Restricted AI

MythosWatch monitors access to Anthropic's restricted Claude Mythos Preview model, aggregating 39 public signals across named access, reported use, and policy negotiations. Current data shows 12 named partners and 40+ undisclosed organizations, with early access concentrated in infrastructure, security, finance, and government. A Bloomberg report revealed unauthorized access through a third-party vendor. Distribution patterns follow Five Eyes alignment with notable EU gaps.