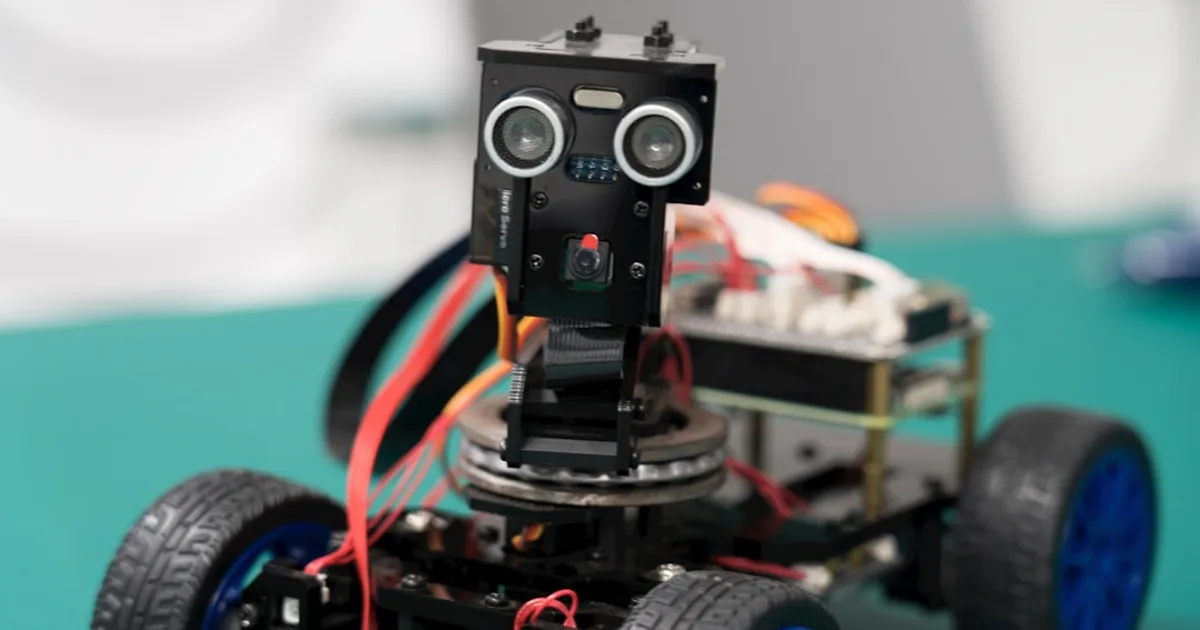

Indraneel Patil and Bruce Kim decided to build a robot vacuum instead of buying one. Three months of part-time work later, they had RoboVac, a $300 DIY cleaner that steers itself using only a camera and a convolutional neural network. No LiDAR, no mapping. Just a webcam streaming frames to a laptop, which runs inference and sends navigation commands back to the robot.

The approach is behavior cloning. They teleoperated the robot around their home, recording image-action pairs, then trained a CNN to predict discrete commands like forward, reverse, turn, and stop. The results are mixed. The robot learned to back away from obstacles, which is genuinely useful. But it can't tell free space from blocked space. It oscillates in open areas like the kitchen. It gets stuck often.
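As a rough illustration of what behavior cloning with discrete commands involves, the sketch below treats each recorded frame as a classification example: the network outputs one score per command, and training penalizes it when the operator's recorded command gets low probability. The `ACTIONS` list, the example scores, and the function names are assumptions made for illustration. The write-up does not describe the authors' actual network, action set, or loss function.

```python
import math

# Hypothetical action set. The article mentions forward, reverse, turn,
# and stop as examples; the real command list may differ.
ACTIONS = ["forward", "reverse", "turn", "stop"]


def softmax(logits):
    """Turn raw per-action scores into probabilities that sum to 1."""
    peak = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def cross_entropy(logits, recorded_action):
    """Loss for one image-action pair: low when the network assigns
    high probability to the command the human operator actually gave."""
    probs = softmax(logits)
    return -math.log(probs[ACTIONS.index(recorded_action)])


def predict_action(logits):
    """At inference time, pick the highest-scoring command."""
    best = max(range(len(logits)), key=lambda i: logits[i])
    return ACTIONS[best]


# Made-up scores standing in for a CNN's output on one camera frame.
example_logits = [2.0, 0.1, -1.0, 0.3]

print(predict_action(example_logits))
print(cross_entropy(example_logits, "forward"))  # operator agreed with the top score
print(cross_entropy(example_logits, "reverse"))  # operator disagreed, so the loss is higher
```

Framing the problem this way makes the failure mode easier to see: if two nearly identical frames were recorded with different commands, no set of scores can make the loss small for both.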

Patil tried data augmentation and ImageNet pre-training to fix the high validation loss. Neither helped. The problem, he writes, is that a single image frame doesn't carry enough signal for the network to learn from. There's no temporal history, no sense of motion or depth. The network can't learn turning behaviors because static images don't encode direction changes well. It's a fundamental limitation of the single-frame approach, not a data quantity problem.
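One common way to give an image-based policy some temporal history is frame stacking: feeding the network the last few frames together instead of a single one. The write-up does not say whether the authors tried this, so the sketch below is only an illustration of the idea. The `FrameStacker` class and its parameter `k` are hypothetical names, and the frames are plain strings standing in for images.

```python
from collections import deque


class FrameStacker:
    """Keeps the k most recent frames so a model can see short-term motion."""

    def __init__(self, k):
        if k < 1:
            raise ValueError("k must be at least 1")
        self.k = k
        self.frames = deque(maxlen=k)  # oldest frame drops off automatically

    def push(self, frame):
        """Add a new frame and return the current stack, oldest first."""
        if not self.frames:
            # On the first frame, pad the stack by repeating it k times
            # so the output always has the same length.
            self.frames.extend([frame] * self.k)
        else:
            self.frames.append(frame)
        return list(self.frames)


# Placeholder frames; a real pipeline would pass image arrays.
demo = FrameStacker(k=3)
for name in ["f0", "f1", "f2", "f3"]:
    print(demo.push(name))
```

With a stack like this, the difference between consecutive frames carries some information about which way the robot is turning, which a single still image cannot provide.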

Hacker News commenters pointed out the gap: without simultaneous localization and mapping (SLAM) or some other form of spatial mapping, a robot can't clean a room systematically. Commercial robots use SLAM to track where they've been and ensure coverage. One commenter shared ORBSlammer, a localization service built on ORB-SLAM3 that could add mapping capabilities to projects like this.

Patil and Kim are upfront about their robot's limits. The vacuum suction is weak, there's no autonomous charging, and it needs babysitting while cleaning. For $300 in parts, it's a clear demonstration of where camera-only navigation breaks down.