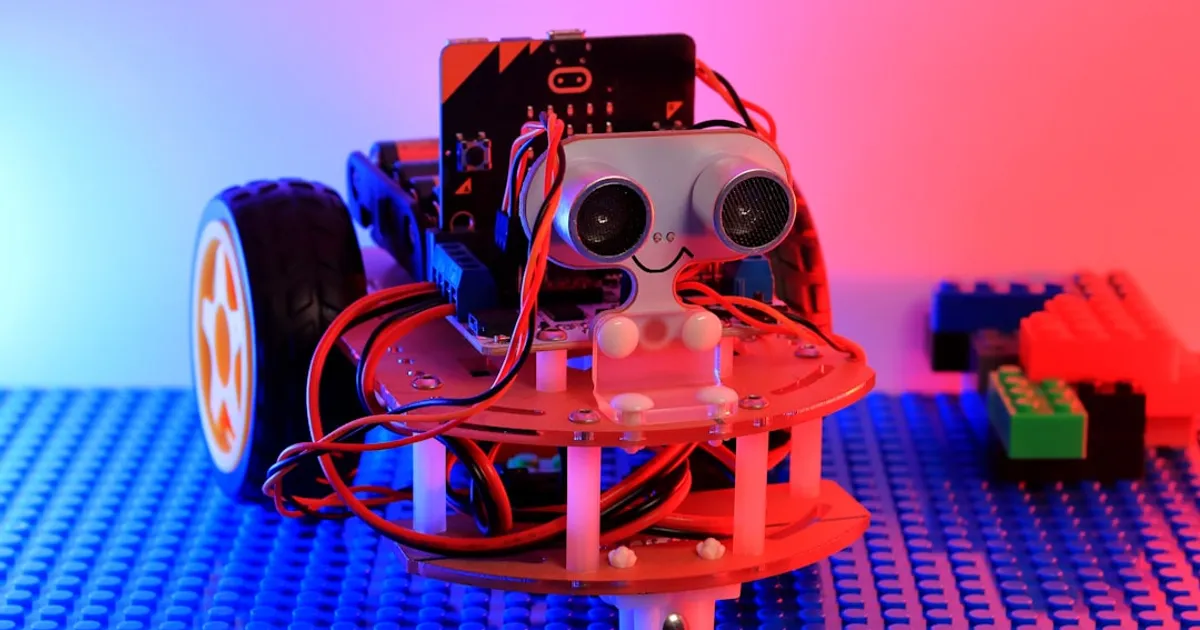

Bruce Kim and Indraneel Patil wanted a robot vacuum. Instead of buying one, they built their own for about $300 using off-the-shelf parts. The robot uses a simple camera and a CNN trained with behavior cloning to decide where to move. No LiDAR, no fancy sensors, just vision. To keep costs down, there's no onboard compute. The robot streams video to a laptop, which runs the model and sends commands back.
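The split between robot and laptop can be sketched as a simple loop: a frame comes in, the policy scores each action, and the best one goes back as a command. This is a minimal illustration, not the project's code. The action names, `pick_action`, `control_loop`, and the `dummy_policy` stand-in for the trained CNN are all assumptions, and frames are plain lists of brightness values rather than real images.

```python
from typing import Callable, Iterable, List, Sequence

# Hypothetical action set; the project's real command vocabulary may differ.
ACTIONS = ("forward", "left", "right", "reverse")

Frame = Sequence[float]  # stand-in for a decoded camera image


def pick_action(scores: Sequence[float]) -> str:
    """Return the action with the highest score (argmax)."""
    best = max(range(len(scores)), key=lambda i: scores[i])
    return ACTIONS[best]


def control_loop(
    frames: Iterable[Frame],
    policy: Callable[[Frame], Sequence[float]],
    send_command: Callable[[str], None],
) -> None:
    """Laptop side: for each streamed frame, run the policy and reply with a command."""
    for frame in frames:
        send_command(pick_action(policy(frame)))


def dummy_policy(frame: Frame) -> List[float]:
    """Placeholder for the trained CNN: steers toward the brighter half of the frame."""
    half = len(frame) // 2
    left, right = sum(frame[:half]), sum(frame[half:])
    return [0.5, left, right, 0.0]  # scores in ACTIONS order


# Simulated stream of three tiny "frames"; a list stands in for the network link.
sent: List[str] = []
control_loop(
    [[0.9, 0.8, 0.1, 0.0], [0.0, 0.1, 0.7, 0.9], [0.1, 0.1, 0.1, 0.1]],
    dummy_policy,
    sent.append,
)
print(sent)  # ['left', 'right', 'forward']
```

In a real setup the frame source and `send_command` would wrap whatever transport the robot uses, and every command pays a network round trip, which is the price of keeping compute off the robot.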

The steering model struggles. The robot reverses when it shouldn't, oscillates in open spaces like the kitchen, and gets stuck in corners. Kim and Patil tried data augmentation and ImageNet pre-training to fix the high validation loss. Neither worked, which points away from plain overfitting: the dataset itself appears to lack the signal the network needs to learn proper movement. Hacker News commenters noted that the validation curve suggests memorization, not actual learning.
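One rough way to tell memorization from learning is to compare the model's losses against a baseline that never looks at the image and always predicts the action frequencies seen in training. The sketch below assumes a classification setup with cross-entropy loss. The function names, the label data, the loss values, and the `margin` threshold are all made up for illustration and are not the authors' numbers.

```python
import math
from collections import Counter
from typing import Sequence


def prior_baseline_loss(train_labels: Sequence[str], val_labels: Sequence[str]) -> float:
    """Mean cross-entropy on val_labels for a predictor that ignores the image
    and always outputs the training-set action frequencies."""
    counts = Counter(train_labels)
    total = len(train_labels)
    eps = 1e-9  # avoids log(0) for actions never seen in training
    return -sum(math.log(counts[y] / total + eps) for y in val_labels) / len(val_labels)


def diagnose(train_loss: float, val_loss: float, baseline: float, margin: float = 0.05) -> str:
    """Heuristic reading of the losses; margin is an arbitrary illustrative threshold."""
    if val_loss < baseline - margin:
        return "generalizing: validation beats the image-blind baseline"
    if train_loss < baseline - margin:
        return "memorizing: training beats the baseline, validation does not"
    return "not learning: neither split beats the baseline"


# Made-up demonstration labels and losses, for illustration only.
train_labels = ["forward"] * 6 + ["left"] * 2 + ["right"] * 2
val_labels = ["forward", "forward", "left", "right"]
baseline = prior_baseline_loss(train_labels, val_labels)

print(diagnose(train_loss=0.20, val_loss=1.10, baseline=baseline))
```

A low training loss paired with a validation loss no better than this baseline is the pattern the commenters describe: the network has fit the frames it saw without picking up anything that transfers to new ones.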

The hardware works. The software doesn't quite. There's no autonomous charging, the vacuum suction is weak, and the robot needs supervision because it gets stuck. Commercial robots from iRobot and Roborock solve these problems with vSLAM and dedicated neural processing units that run depth estimation locally on chips like Intel's Movidius VPU. Commenters suggested that Apple's Depth Pro model could help with depth perception, or that VLMs could be used to bootstrap better training data. For a three-month part-time project that hit its budget target, it's an honest attempt at something genuinely hard.