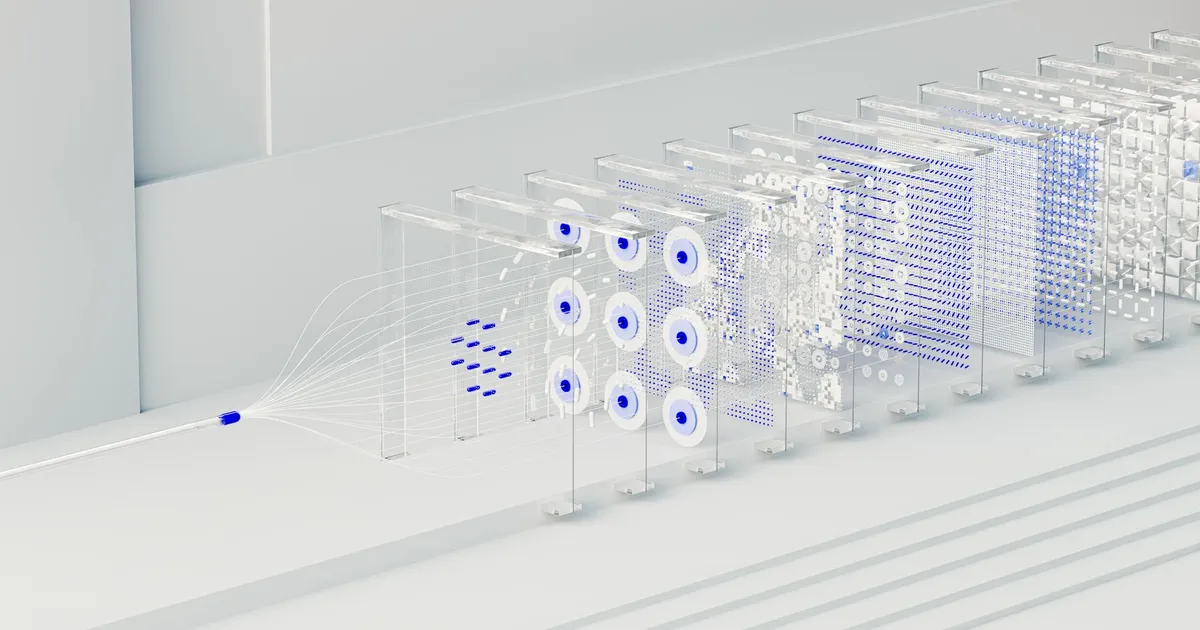

Intel's AutoRound has quietly become one of the more practical quantization tools for anyone running large language models. The toolkit uses sign-gradient descent to compress LLMs and vision-language models down to 2-4 bits while keeping accuracy mostly intact. At 4-bit quantization, AutoRound retains 99.4 to 100% of BF16 accuracy according to community benchmarks. That edges out standard quantization methods, which typically land around 99 to 99.8%.

What makes AutoRound different from GPTQ and AWQ is the optimization approach. Those methods focus on 4-bit quantization with heavier calibration requirements. AutoRound needs just 200 tuning steps and as few as 128 calibration samples to hit its numbers. The SignRoundV2 paper, published in December 2025 by Intel researchers Wenhua Cheng, Weiwei Zhang, Heng Guo, and Haihao Shen, introduced a sensitivity metric that combines gradient information with quantization deviations to automatically assign bit widths layer by layer. The results are concrete: an improved INT2 algorithm achieved 97.9% accuracy retention on DeepSeek-R1, a 671B-parameter model that the quantized build shrinks to around 200GB.
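To make the sign-gradient idea concrete, here is a minimal NumPy sketch for a single linear layer. It is a toy under stated assumptions, not AutoRound's actual implementation: the function names, the per-column symmetric scale, and the linearly decaying step size are illustrative choices, and the learned clipping ranges that the real method also tunes are left out. The idea is to learn a small rounding offset `V` in [-0.5, 0.5] for every weight, stepping each offset by the sign of its gradient so the quantized layer's output tracks the original layer's output on calibration data.

```python
import numpy as np

def fake_quant(W, V, scale, qmax):
    """Round W/scale + V to the signed integer grid, then map back to floats."""
    q = np.clip(np.round(W / scale + V), -qmax - 1, qmax)
    return q * scale

def sign_round(W, X, bits=4, steps=200):
    """Toy sign-gradient rounding for one layer (hypothetical helper).

    W: (in_features, out_features) weights, X: (n_samples, in_features) inputs.
    Returns the best quantized weights found and their output MSE.
    """
    qmax = 2 ** (bits - 1) - 1
    # Assumption: one symmetric scale per output column.
    scale = np.abs(W).max(axis=0, keepdims=True) / qmax
    Y = X @ W                      # reference output of the unquantized layer
    V = np.zeros_like(W)           # V = 0 is plain round-to-nearest
    best_V, best_loss = V.copy(), np.inf
    base_lr = 1.0 / steps
    for step in range(steps):
        err = X @ fake_quant(W, V, scale, qmax) - Y
        loss = float(np.mean(err ** 2))
        if loss < best_loss:       # keep the best offsets seen so far
            best_V, best_loss = V.copy(), loss
        # Straight-through estimator: treat round() as identity, so the
        # gradient w.r.t. V is proportional to (X^T err) * scale.
        grad_V = (X.T @ err) * scale
        lr = base_lr * (1 - step / steps)   # linear decay (assumed schedule)
        # Sign-gradient step: only the direction of the gradient is used.
        V = np.clip(V - lr * np.sign(grad_V), -0.5, 0.5)
    return fake_quant(W, best_V, scale, qmax), best_loss

if __name__ == "__main__":
    rng = np.random.default_rng(0)
    W = rng.normal(size=(16, 8))
    X = rng.normal(size=(128, 16))   # 128 calibration samples
    _, loss = sign_round(W, X, bits=4, steps=200)
    print("best output MSE:", loss)
```

Because the first iteration evaluates `V = 0`, the result can never be worse than plain round-to-nearest on the calibration data; any improvement beyond that depends on the layer and the samples.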

That compression ratio matters. You can run models such as Qwen3.6-27B on hardware that would normally choke on them.
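A rough back-of-the-envelope estimate shows why. The sketch below assumes a 27-billion-parameter model (read off the model name), a 16-bit scale stored per group of 128 weights, and ignores zero-points, layers left unquantized, activations, and the KV cache; `weight_memory_gb` is a hypothetical helper, not part of any library.

```python
def weight_memory_gb(n_params, bits, group_size=None, scale_bits=16):
    """Approximate weight storage in GB (1 GB = 1e9 bytes).

    If group_size is given, add the cost of one scale per group of weights.
    """
    bits_per_weight = bits
    if group_size:
        bits_per_weight += scale_bits / group_size
    return n_params * bits_per_weight / 8 / 1e9

if __name__ == "__main__":
    n = 27e9  # assumed parameter count
    print(f"BF16: {weight_memory_gb(n, 16):.1f} GB")
    print(f"INT4: {weight_memory_gb(n, 4, group_size=128):.1f} GB")
    print(f"INT2: {weight_memory_gb(n, 2, group_size=128):.1f} GB")
```

Under these assumptions the weights drop from roughly 54 GB in BF16 to about 14 GB at 4 bits, which is the difference between needing multiple accelerators and fitting on a single consumer GPU.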

Deployment flexibility is where AutoRound pulls ahead. It exports to GGUF, AutoAWQ, and AutoGPTQ formats. It landed in vLLM and Hugging Face Transformers in May 2025, then SGLang in October, and LLM-Compressor in November. Tools like TurboQuant also implement aggressive weight quantization strategies within the llama.cpp ecosystem.

For teams deploying models at scale, the math is straightforward. Shrink the model, keep the quality, run it on cheaper hardware. AutoRound isn't the only option in this space. But few competitors match its accuracy at 2-4 bits while keeping calibration this fast.