Alasdair Allan built Vera because he thinks LLMs can't handle variable names. The language, named after the Latin for truth, gets rid of them entirely. Instead of writing `divisor` or `user_count`, you write `@Int.0` and `@Int.1`. Empirical research shows models lose track of what names refer to, reuse names wrong, and pick misleading ones. Vera replaces all that with positional references, mandatory contracts verified by the Z3 SMT solver, and explicit effect declarations. If the contracts don't hold, the code doesn't compile. Everything targets WebAssembly.

The benchmarks tell a mixed story. Kimi K2.5 hit 100% on Vera's 50-problem test, beating its Python score of 86%. That's impressive, especially since no model had seen Vera before. They learned it from a single SKILL.md document at evaluation time—a technique essential for agent efficiency, similar to the Ralph methodology that breaks projects into LLM-friendly chunks. But GPT-4.1 scored 91% on Vera versus 96% on Python. Claude Sonnet 4 dropped from 96% on Python to 79% on Vera. Moonshot AI trained Kimi heavily on formal verification and theorem proving, which explains why it clicked with Vera's contract-heavy structure. The efficiency-optimized Kimi K2 Turbo only managed 83%, suggesting the full model's capacity for formal logic matters.

Hacker News commenters aren't convinced. Several argue that variable names carry semantic meaning that helps LLMs understand code, not just humans. Strip out names and you force the model to track a mental stack of positional arguments with zero clues about what each one represents. The most effective coding agents work well partly because they can read expressive, name-rich code where context lives in the text itself. Removing that context could backfire.

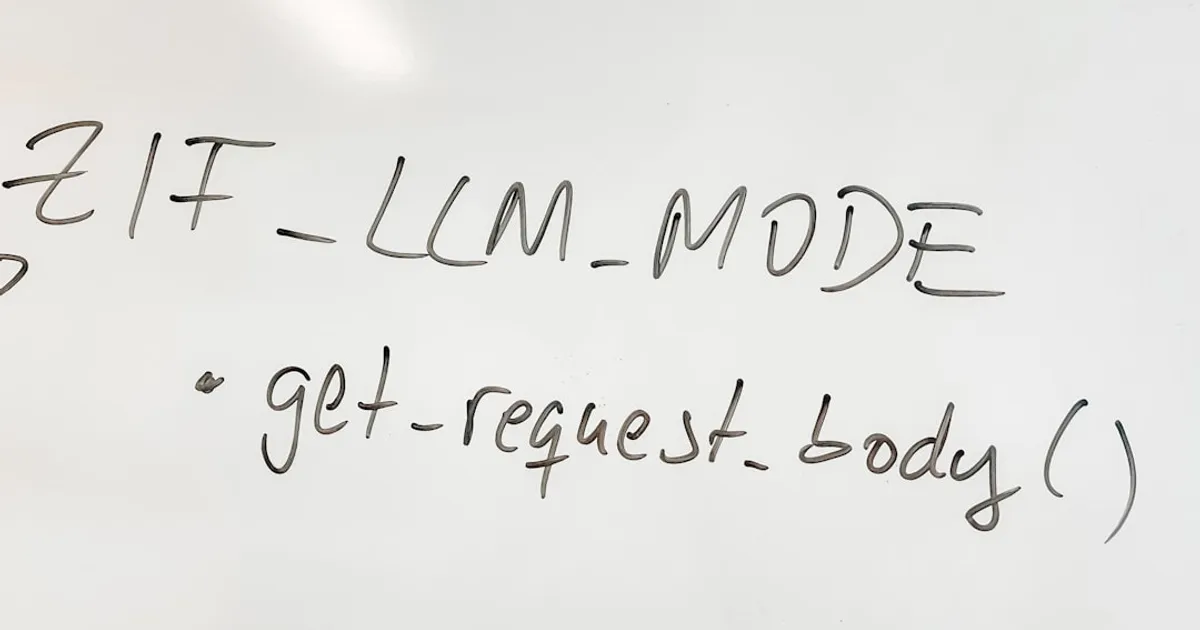

Vera sits at v0.0.127 with a 13-chapter spec, a reference compiler, and error messages written for machines, not humans. Each error comes with a stable code, a concrete fix, and a spec chapter reference. That last bit is genuinely clever. If agent-written code becomes common, compiler output designed for LLM feedback loops makes a lot of sense. Whether the no-names approach works at scale beyond models trained on formal reasoning remains an open question.