Sam Altman published OpenAI's five core principles on April 26: Democratization, Empowerment, Universal Prosperity, Resilience, and Adaptability. Altman argues that "power in the future can either be held by a small handful of companies using and controlling superintelligence, or it can be held in a decentralized way by people." He says OpenAI is committed to the latter. The company frames its massive compute investments, vertical integration, and global datacenter expansion as steps toward "universal prosperity," not market dominance.

The Hacker News community wasn't buying it.

One top comment read "FOSS || GTFO," cutting straight to the tension between OpenAI's democratization rhetoric and its closed-source, proprietary model development. Another suggested the principles "read like an April Fools Day post." Fair enough. OpenAI controls some of the most powerful AI models in existence, runs them through a closed API, and has moved further from its original open-source mission with each passing year. Telling the world you're against power consolidation while holding that power is a tough sell.

Regulators are watching. The FTC has launched investigations into AI investments and partnerships. The EU's Digital Markets Act targets the kind of gatekeeper dynamics that OpenAI's vertical integration creates. When one company controls the compute, the models, and the consumer applications, that's a natural monopoly in the making.

No amount of principles language changes the structural reality.

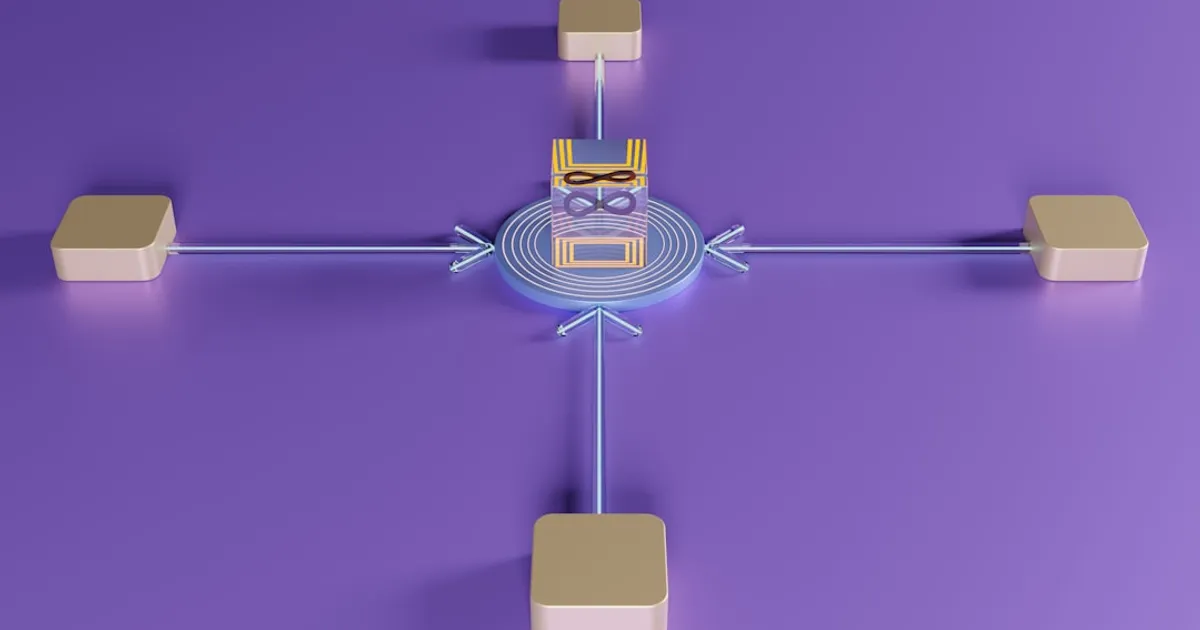

For the AI agent ecosystem, this matters. Agents built on OpenAI's stack live or die by how open that stack remains. If democratization wins out, developers get more access and more room to build. If "universal prosperity" just means "everyone uses ChatGPT," the agent ecosystem stays locked to one company's platform.

The real question isn't what Altman publishes. It's what he lets you build.