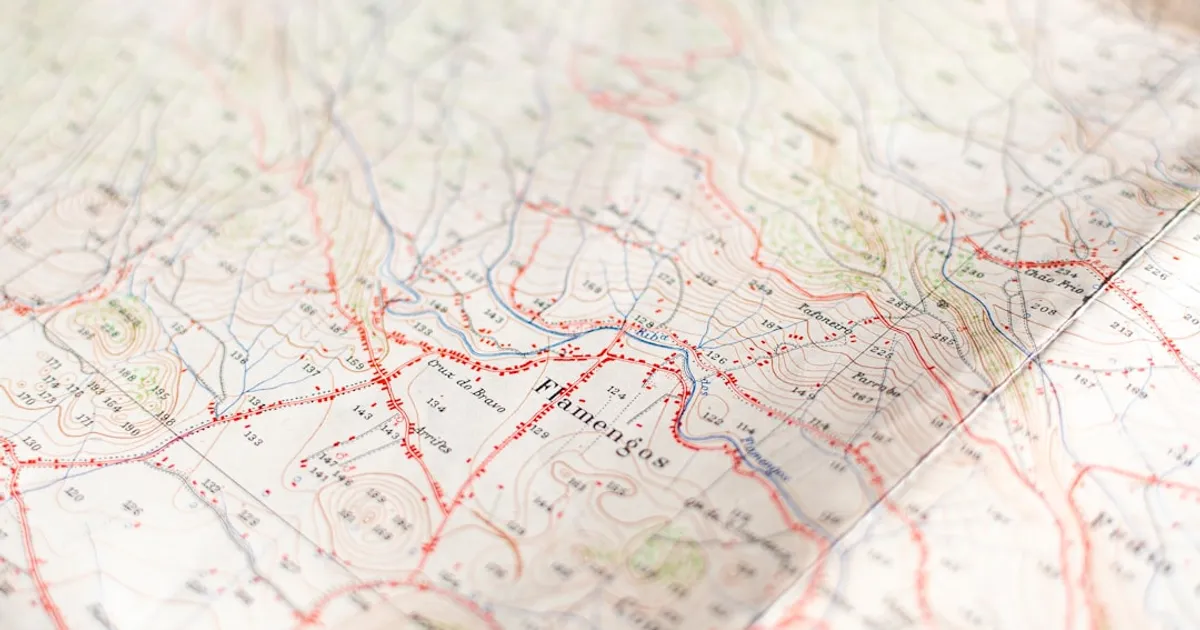

Gal Sapir wants us to think about AI models as maps. These aren't the kind you stuff in a glove compartment. They shift depending on who's looking. In a recent essay, Sapir draws on Borges' story about cartographers who built a map the size of the empire itself. A map so detailed it became useless. Maps work because they reduce complexity. When they get too faithful, they lose their point.

Sapir uses Baudrillard's four stages of representation to frame what's happening with LMs. Stage one: the image is a faithful copy. LMs were designed for this, trained to reproduce patterns in human text. But they also occupy stage two, where the image distorts reality. Ask about the 2008 financial crisis and you get a smoothed-out consensus, not the unresolved debates among actual economists. A related distortion has surfaced in scientific publishing, where citations to papers that do not exist have started turning up in reference lists. Stage three is where things get uncomfortable. The representation masks the absence of reality. You stop checking sources because the answer looks right. You stop exploring because the summary feels sufficient. The activity looks the same but something's been hollowed out.

The skill that matters, Sapir argues, is largely tacit. Drawing on Polanyi's observation that "we can know more than we can tell," Sapir suggests that reading LM output well means developing a feel for when a claim hasn't been verified or when the output is too smooth and needs checking against reality. It's like a developer's "code smell" or a clinician's instinct that a patient "looks sick" before the labs come back. You learn it through practice, not checklists. And because LM responses change based on who's prompting (some studies suggest that response sophistication correlates with the user's educational background), these intuitions calibrate to your personal version of the map.

The technical risk is concrete. Shumailov and colleagues documented "Model Collapse" in their 2023 paper, showing how models trained on synthetic data from other models enter a degenerative loop. Minority data disappears. Output converges to bland averages. This mirrors the challenges explored in a project that trains a model on synthetic conversations to understand how these pipelines degrade. Sapir's essay arrives at an honest conclusion: the most important skill for working with these maps can't itself be mapped. Somewhere between obsession and indifference, there's the practice of using them well while staying aware of how they change you.
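
To make the degenerative loop a little more tangible, here is a toy sketch in Python. It is not the setup from the Shumailov paper, just a minimal illustration under loud assumptions: the "model" is nothing more than a table of token frequencies, "training" means counting a finite sample drawn from the previous generation, and the token names, sample size, and generation count are all made up for the example.

```python
import random
from collections import Counter


def refit(dist, n_samples, rng):
    """Draw n_samples tokens from dist and return their empirical frequencies.

    Tokens that happen to draw zero samples are dropped from the result,
    which stands in for a model that never saw them during training.
    """
    tokens = list(dist)
    weights = [dist[t] for t in tokens]
    sample = rng.choices(tokens, weights=weights, k=n_samples)
    counts = Counter(sample)
    return {token: count / n_samples for token, count in counts.items()}


def simulate(dist, generations, n_samples, seed=0):
    """Train each generation only on samples produced by the previous one."""
    rng = random.Random(seed)
    history = [dict(dist)]
    for _ in range(generations):
        dist = refit(dist, n_samples, rng)
        history.append(dist)
    return history


# Hypothetical starting distribution: one dominant token, two minority tokens.
original = {"common": 0.90, "uncommon": 0.08, "rare": 0.02}

history = simulate(original, generations=50, n_samples=100)
support_sizes = [len(d) for d in history]

for generation in (0, 10, 25, 50):
    print(generation, history[generation])
```

The point of the sketch is structural rather than numerical. Once a token draws zero samples in some generation, its probability is zero in every later one, so the number of surviving tokens can only shrink or hold steady. Low-probability tokens are the most likely to hit zero first, which is the toy version of minority data disappearing.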