LM Studio has been a go-to desktop app for running local models. Version 0.4.0 opens new territory: it extracts the inference engine into a standalone daemon called llmster and wraps it with a full command-line interface. No GUI required. This puts LM Studio in direct competition with Ollama and LocalAI for server deployments, CI/CD pipelines, and anyone who prefers living in the terminal.

George Liu tested the setup with Google's newly released Gemma 4 26B, a mixture-of-experts model that activates only 3.8B of its 26B parameters per token. On his MacBook Pro M4 Pro with 48GB RAM, it hits 51 tokens per second. That's fast for a model that benchmarks competitively against 400B+ parameter giants on reasoning tasks. The MoE architecture speeds up inference by consulting fewer parameters per token. The downside: you still need to load all 26B weights into memory. Faster serving once loaded, but no savings on memory footprint.
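The memory-versus-compute trade-off is easy to check with back-of-the-envelope arithmetic. The sketch below uses only the two parameter counts quoted above; the bit widths are illustrative assumptions rather than anything Liu or LM Studio specifies, and the estimate covers weights only, ignoring the KV cache and runtime overhead.

```python
# Rough MoE arithmetic: what must sit in memory vs. what is used per token.
TOTAL_PARAMS = 26e9    # total parameters (figure from the article)
ACTIVE_PARAMS = 3.8e9  # parameters activated per token (figure from the article)


def weight_memory_gb(params: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in GB (1 GB = 1e9 bytes)."""
    return params * bits_per_weight / 8 / 1e9


# Share of the weights that a single token actually touches.
active_fraction = ACTIVE_PARAMS / TOTAL_PARAMS

# Bit widths below are assumed for illustration, not LM Studio defaults.
for bits in (16, 8, 4):
    resident = weight_memory_gb(TOTAL_PARAMS, bits)
    print(f"{bits:>2}-bit weights: ~{resident:.0f} GB resident")

print(f"active per token: {active_fraction:.1%} of all weights")
```

Under these assumptions, 16-bit weights alone come to roughly 52 GB, more than the 48GB on Liu's machine, while each token touches only about 15% of them. That is the whole trade: the speed of a small model, the footprint of a large one.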

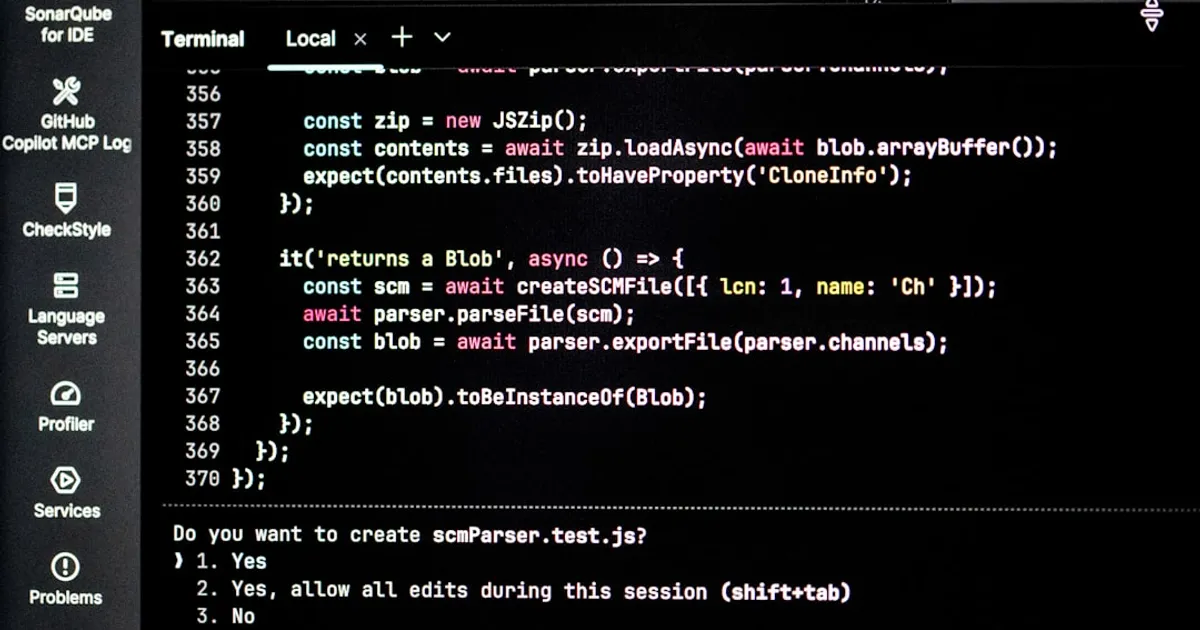

The new CLI supports continuous batching for parallel requests, a stateful REST API that maintains conversation history, and MCP integration with permission-key gating. These features previously required rolling your own solution on top of llama.cpp. Liu's guide covers installation and Claude Code integration. One Hacker News commenter raised a fair concern about that integration, given Anthropic's historical preference for controlling usage patterns. For now, the tooling works as advertised.
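To see what parallel requests look like from the client side, here is a minimal sketch. It assumes the daemon is listening on LM Studio's usual local port (1234) and exposes the OpenAI-compatible chat endpoint; the model identifier is a placeholder, and the helper names are hypothetical. It deliberately uses the stateless OpenAI-style endpoint, not the stateful API mentioned above, whose request format this article does not describe.

```python
import json
import urllib.request
from concurrent.futures import ThreadPoolExecutor

# Assumptions: default local port and OpenAI-compatible route.
# MODEL is a placeholder; substitute whatever identifier your server reports.
BASE_URL = "http://localhost:1234/v1"
MODEL = "your-model-identifier"


def build_request(prompt: str, base_url: str = BASE_URL,
                  model: str = MODEL) -> urllib.request.Request:
    """Build (but do not send) a chat-completion request for one prompt."""
    body = {"model": model, "messages": [{"role": "user", "content": prompt}]}
    return urllib.request.Request(
        f"{base_url}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(prompt: str) -> str:
    """Send one prompt and return the text of the first choice."""
    with urllib.request.urlopen(build_request(prompt), timeout=120) as resp:
        reply = json.load(resp)
    return reply["choices"][0]["message"]["content"]


def ask_many(prompts: list[str], workers: int = 4) -> list[str]:
    """Issue prompts concurrently; results come back in input order."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(ask, prompts))

# Example (requires a running server):
#   answers = ask_many(["Summarize MoE in one line.", "What is MCP?"])
```

The client only fires requests concurrently; whether they are actually batched together is up to the server. With continuous batching enabled, concurrent calls like these should be served together rather than queued one after another.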