A survey of perspectives from Rust project contributors and maintainers, compiled by Rust language designer Niko Matsakis on February 27, reveals a community deeply divided — but thoughtfully so — on the role of AI and LLM agents in software development. The document, which draws on a discussion that began February 6, is explicitly not an official Rust project position but rather a structured landscape of individual views ranging from genuine enthusiasm to principled skepticism. Matsakis himself described feeling "empowered" by LLM tooling, while others reported that AI produces more overhead than value for their workflows.

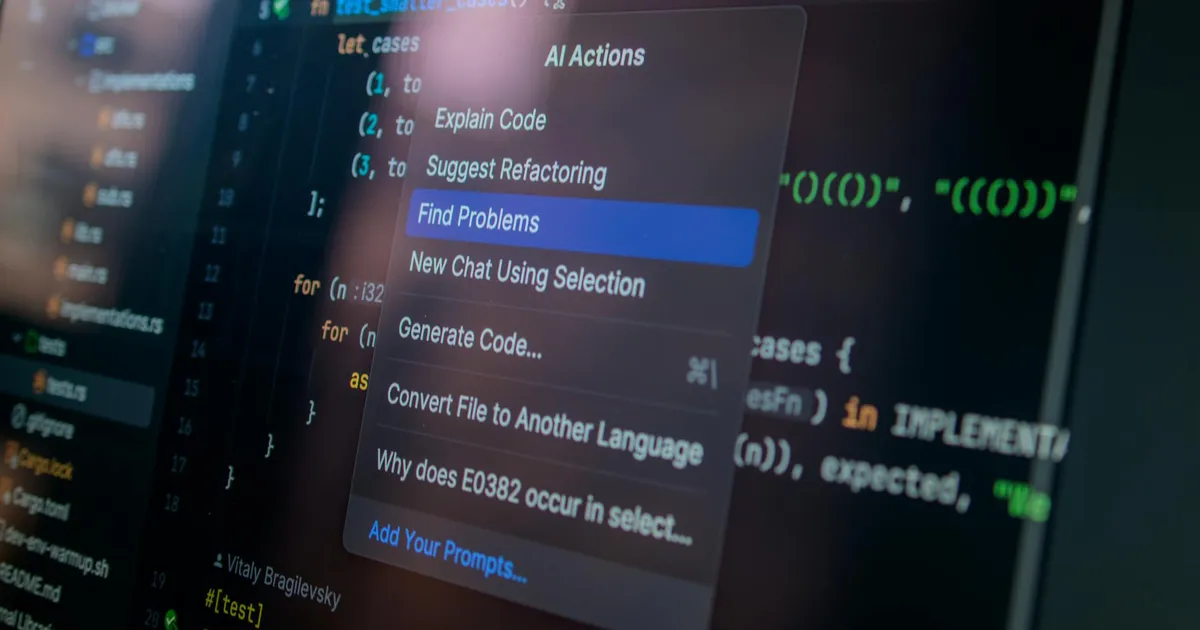

A recurring theme across the document is that AI effectiveness is inseparable from engineering discipline. Contributor TC emphasized that getting good results requires carefully structuring problems, managing context windows, and keeping models within their "flight envelope", a point that helps explain the wide divergence in reported outcomes. Claude Code was explicitly named by contributors including kobzol as useful for refactoring, boilerplate automation, and codebase exploration. For non-coding tasks, the picture was clearer: contributor davidtwco at Arm cited internal AI tooling that makes navigating over 10,000 pages of architecture documentation tractable, while BennoLossin noted value in using LLMs as a rubber-duck debugging partner. RalfJung referenced an experiment in which Linux kernel maintainers use LLM agents with carefully crafted, project-specific prompts to assist code review; he noted that it "cannot replace human code review and approval" but could help overloaded reviewers manage throughput.

The counterarguments were equally substantive. Multiple contributors raised concerns about skill atrophy: the risk of losing deep engagement with code when offloading cognitive work to a model. Ethical objections around training data provenance, concentration of power among a small number of AI companies, and energy consumption were recurring threads. One concern carries clear long-term stakes: whether agentic AI coding tools will become the new proprietary compilers, that is, infrastructure that the open-source ecosystem comes to depend on but does not control. RalfJung's suggested mitigation, self-hosted open-weight models, points to a growing interest in reducing that dependency, though he acknowledged such models are only beginning to approach proprietary quality.

Rust contributors build the toolchain, compiler, and standard library that other engineers depend on, and the community has a long tradition of prioritizing correctness over convenience. Their split verdict reflects the actual variance in outcomes with current tools, not a failure to engage seriously with them. The document's most durable finding may be the dependency question Matsakis chose to include: if AI coding infrastructure ends up controlled by a handful of companies, how well it performs today is only part of the story.