A recent opinion piece circulating on Hacker News draws a pointed distinction between what LLMs are trained on and what software engineering actually involves. The argument is straightforward: training corpora for coding models consist overwhelmingly of finished artifacts — polished repositories, Stack Overflow answers, documentation, merged code — rather than the messy, iterative process by which developers arrive at those artifacts. Models learn to imitate the shape of good code without internalizing the reasoning, backtracking, and debugging that produced it.

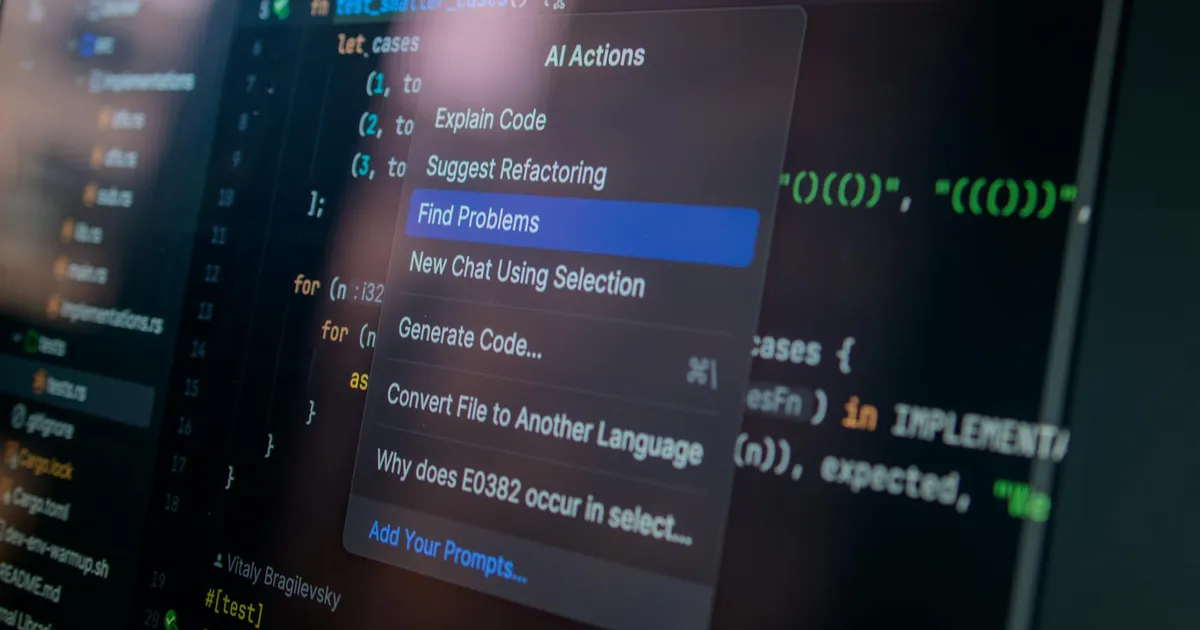

Commenters pushed back with specifics. One noted that AI labs have likely moved beyond training on HEAD commits alone, suggesting that reinforcement learning on full git histories could expose models to the trial-and-error signal missing from static snapshots. Another pointed to alternative data sources: live-coding videos on YouTube and session recordings from AI-assisted IDEs like Cursor. The reasoning is that pair-programming data (which files a developer opens, which tools they switch between, how they navigate an unfamiliar codebase) captures process signal that artifact-only training misses entirely. That commenter singled out Cursor, which sits at the intersection of developer workflow and LLM assistance, as a potential generator of this training data.
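
To make the snapshot-versus-history distinction concrete, here is a minimal sketch of how a revision history carries information that the final version alone does not. The three revisions are invented toy data, not taken from any real repository, and `edit_steps` is a hypothetical helper; nothing here reflects how any lab actually builds training data.

```python
import difflib

# Toy, made-up revision history of a single function (illustrative only).
revisions = [
    "def mean(xs):\n    return sum(xs) / len(xs)\n",
    "def mean(xs):\n    if not xs:\n        return 0\n    return sum(xs) / len(xs)\n",
    "def mean(xs):\n    if not xs:\n        raise ValueError('empty input')\n"
    "    return sum(xs) / len(xs)\n",
]


def edit_steps(history):
    """Return one unified diff per consecutive pair of revisions."""
    steps = []
    for before, after in zip(history, history[1:]):
        diff = difflib.unified_diff(
            before.splitlines(keepends=True),
            after.splitlines(keepends=True),
            fromfile="before",
            tofile="after",
        )
        steps.append("".join(diff))
    return steps


# What artifact-only training would see: just the last revision.
head_only = revisions[-1]

# What history-aware training could see: the intermediate edits as well.
steps = edit_steps(revisions)

for i, step in enumerate(steps, start=1):
    print(f"--- edit {i} ---")
    print(step)
```

In this toy history, the abandoned `return 0` fix shows up only in the diffs. The HEAD version contains no trace that it was ever tried and then replaced.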

A third comment offered a sharp methodological check on the whole discussion, via a Zen-inflected parable. A novice observes that an LLM adds colons to CLI output, builds a confident mechanistic theory about training on terminal screenshots rather than keystrokes, then runs the same prompt again and the behavior disappears. The moral — "you built a cage for a cloud" — is a warning against constructing explanatory frameworks from stochastic, non-reproducible observations. Single behavioral examples are weak evidence for claims about training data or model architecture, and the field has a recurring tendency to over-interpret them.
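
The parable's point can be put in numbers. The sketch below reruns a prompt many times and reports how often a behavior appears, with a Wilson score interval around that rate. `fake_model` is a hypothetical stand-in for a real LLM call, and its 30% colon rate is an arbitrary assumption chosen for illustration.

```python
import math
import random


def wilson_interval(successes, trials, z=1.96):
    """Approximate 95% Wilson score interval for a binomial proportion."""
    if trials == 0:
        return (0.0, 1.0)
    p_hat = successes / trials
    denom = 1 + z * z / trials
    center = (p_hat + z * z / (2 * trials)) / denom
    half = z * math.sqrt(
        p_hat * (1 - p_hat) / trials + z * z / (4 * trials * trials)
    ) / denom
    return (max(0.0, center - half), min(1.0, center + half))


def fake_model(prompt, rng):
    """Hypothetical stand-in for an LLM: emits a colon 30% of the time."""
    return "status: ok" if rng.random() < 0.3 else "status ok"


def behavior_rate(prompt, trials, seed=0):
    """Count how often the output contains a colon across repeated runs."""
    rng = random.Random(seed)
    hits = sum(":" in fake_model(prompt, rng) for _ in range(trials))
    return hits, wilson_interval(hits, trials)


# A single observation versus a larger sample of the same prompt.
print("one run showing the behavior:", wilson_interval(1, 1))
hits, interval = behavior_rate("print the status", trials=200)
print(f"{hits}/200 runs showed the behavior, interval: {interval}")
```

A single run that shows the behavior gives an interval of roughly 0.21 to 1.0, which is compatible with almost any underlying rate. Only repeated sampling narrows it enough to support a theory about why the behavior occurs.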

That parable is probably the most useful thing anyone said in the thread. The original post's thesis is plausible: current models do fail in ways consistent with process gaps, producing code that misses architectural intent or debugging by pattern-matching rather than reasoning. But those failure modes fit a dozen other explanations just as well. Before anyone bets heavily on training with git histories or IDE telemetry, someone needs to establish that process data actually changes model behavior in the ways theorized, not just that it makes for a compelling story.