Neil Kakkar, a software engineer at startup Tano, published a detailed account of how he restructured his development workflow around Claude Code over roughly six weeks — framing the shift not as "using a tool that writes code" but as becoming a manager of agents doing the implementation. The core of his approach was sequential friction removal, each optimization exposing the next bottleneck in a pattern he explicitly attributes to the theory of constraints.

His first unlock was a custom Claude Code skill he calls /git-pr, which automates the full pull request lifecycle (staging, commit messages, PR descriptions, and GitHub submission), eliminating what he describes as a recurring context-switch tax whose cost in attention he had stopped noticing. He followed that by replacing Tano's existing build tooling with SWC, a Rust-based JavaScript compiler, cutting server restart times from roughly one minute to under a second.
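Kakkar's actual /git-pr skill is not reproduced here. As a rough illustration of the pull request steps such automation has to cover, the sketch below builds the command sequence for one PR. It assumes `git` and the GitHub CLI (`gh`) are installed and that the remote is named `origin`; the function names `build_pr_plan` and `run_plan` are hypothetical, and in the real workflow the commit message and PR description would be written by the agent.

```python
import subprocess


def build_pr_plan(commit_message: str, pr_title: str, pr_body: str) -> list[list[str]]:
    """Return the git/gh commands for one pull request, in execution order.

    Hypothetical helper: assumes the remote is called "origin" and that the
    current branch is the feature branch to be submitted.
    """
    return [
        ["git", "add", "--all"],                            # stage every change
        ["git", "commit", "-m", commit_message],            # commit with the given message
        ["git", "push", "--set-upstream", "origin", "HEAD"],  # publish the current branch
        ["gh", "pr", "create", "--title", pr_title, "--body", pr_body],  # open the PR
    ]


def run_plan(plan: list[list[str]], dry_run: bool = True) -> None:
    """Print each command, or execute it and stop at the first failure."""
    for command in plan:
        if dry_run:
            print(" ".join(command))
        else:
            subprocess.run(command, check=True)


if __name__ == "__main__":
    plan = build_pr_plan(
        commit_message="Add export button",
        pr_title="Add export button",
        pr_body="Adds a CSV export button to the reports page.",
    )
    run_plan(plan)  # dry run: only prints the commands
```

Keeping the plan separate from its execution makes the sequence easy to inspect before anything touches the repository, which matters when an agent rather than a person triggers it.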

From there, Kakkar wired Claude Code's built-in preview feature into his agent workflows so that agents verify their own UI output before marking a task complete, removing himself as a bottleneck on every UI change. He then built a port-assignment system for git worktrees: Tano's app runs separate frontend and backend processes, each requiring its own port, and parallel worktrees previously collided because they read the same port settings from shared environment variables. By assigning unique port ranges per worktree, he enabled up to ten simultaneous previews.
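The post's own port-assignment code is not shown here, but the idea of giving each worktree its own port range can be sketched in a few lines. Everything specific below is an assumption made for illustration: the base port, the block size, the environment variable names `FRONTEND_PORT` and `BACKEND_PORT`, and the function names. Only the limit of ten simultaneous previews comes from the account.

```python
from dataclasses import dataclass

BASE_PORT = 3000          # hypothetical start of the reserved range
PORTS_PER_WORKTREE = 10   # hypothetical block size; only two ports are used here
MAX_WORKTREES = 10        # the account mentions up to ten simultaneous previews


@dataclass(frozen=True)
class PortPair:
    frontend: int
    backend: int


def assign_ports(worktree_paths: list[str]) -> dict[str, PortPair]:
    """Give every worktree a non-overlapping block of ports.

    Paths are de-duplicated and sorted so the same set of worktrees
    always yields the same assignment.
    """
    unique_paths = sorted(set(worktree_paths))
    if len(unique_paths) > MAX_WORKTREES:
        raise ValueError(f"at most {MAX_WORKTREES} worktrees are supported")

    assignments: dict[str, PortPair] = {}
    for slot, path in enumerate(unique_paths):
        start = BASE_PORT + slot * PORTS_PER_WORKTREE
        assignments[path] = PortPair(frontend=start, backend=start + 1)
    return assignments


def env_for(pair: PortPair) -> dict[str, str]:
    """Build the per-worktree environment overrides (variable names are assumed)."""
    return {"FRONTEND_PORT": str(pair.frontend), "BACKEND_PORT": str(pair.backend)}


if __name__ == "__main__":
    ports = assign_ports(["/repo/wt-a", "/repo/wt-b"])
    for path, pair in ports.items():
        print(path, env_for(pair))
```

Because slots follow sorted order, adding or removing a worktree can shift the ports of the others. A persistent registry keyed by worktree path would avoid that, at the cost of extra state to maintain.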

The combined result: five concurrent agent worktrees, each autonomously building a separate feature, with Kakkar involved heavily at the planning stage and again only at final code review. An incidental detail in the post reveals that Tano has codified Claude Code usage norms in a company-wide CLAUDE.md — suggesting this is an institutional practice rather than a personal experiment, and a concrete sign that agentic adoption is already moving from individual developers to engineering teams.

Hacker News commentary surfaced substantive skepticism. The top-voted response drew an explicit parallel to the discredited lines-of-code metric of the 1990s, noting that reporting PR throughput without any mention of defect rates, maintenance burden, or code quality repeats a category error developers once rightly criticized management for making. A second commenter asked whether deep review of large agent-generated diffs negates the time savings, arguing that reading unfamiliar machine-generated code across multiple files may be slower than writing it would have been. A third flagged the psychological dimension: parallel agentic workflows feel productive but may produce cognitive overload that degrades the quality of oversight. That last concern carries extra weight given that Kakkar's transformation happened during his onboarding: he was reviewing agent-generated diffs of a codebase he was still learning.

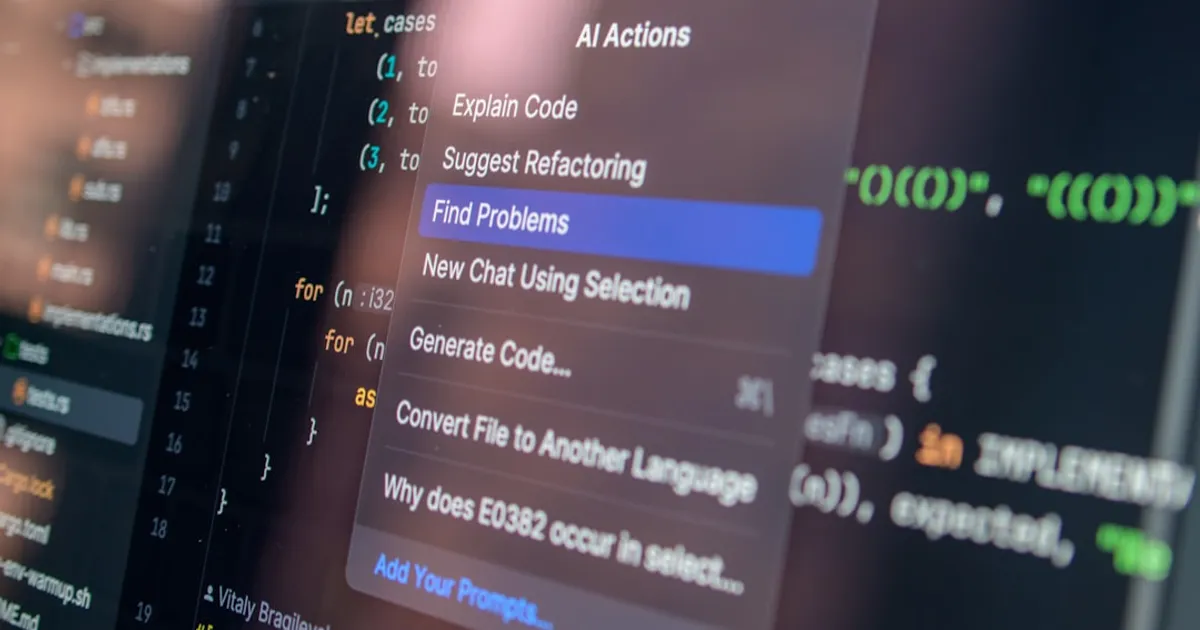

The account is most useful not as a productivity benchmark but as an early practitioner map of the infrastructure layer that agentic coding requires: custom skills, worktree isolation, port management, self-verifying agents. These are plumbing problems, not AI problems, and Kakkar's framing — that the highest-leverage work was building infrastructure rather than writing features — suggests that teams adopting agentic workflows will need dedicated investment in developer tooling before throughput gains become real. His six-week record is one of the more detailed ones published so far. The missing data — defect rates, maintenance cost, review hours — is what the next wave of posts will need to provide.