An open-source npm package called open-terminal (@hasna/terminal) claims to cut LLM token consumption by 70 to 90 percent per agent session by inserting a cheap inference layer between shell output and the frontier model. The figures in this article come from the project's GitHub README; the article linked from the original Hacker News submission returned no content.

The core architecture is a two-tier inference pattern. Open-terminal intercepts shell command output and runs it through Cerebras's API (specifically Qwen-3-235B on Cerebras's wafer-scale silicon) as a preprocessing step before the results reach a more expensive model like Claude Sonnet. According to the README, each Cerebras summarization call costs roughly $0.001 and saves approximately $0.029 in Sonnet tokens, which the project describes as a 21x return. At 500 commands per day, the README estimates $41 per month saved per agent instance. Cerebras offers a free tier, which lowers the barrier to testing at low volumes.
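To make the per-call economics concrete, here is a minimal arithmetic sketch. The function name and the 30-day month are illustrative assumptions, and it assumes every command goes through a summarization call; only the $0.001, $0.029, and 500-commands-per-day inputs come from the figures quoted above.

```python
def summarization_economics(cost_per_call, saved_per_call, calls_per_day, days_per_month=30):
    """Return (gross_ratio, net_saving_per_call, net_saving_per_month).

    Hypothetical helper: assumes every command triggers exactly one
    summarization call and that a month has `days_per_month` days.
    """
    gross_ratio = saved_per_call / cost_per_call        # dollars saved per dollar spent
    net_per_call = saved_per_call - cost_per_call       # saving after paying for the cheap call
    net_per_month = net_per_call * calls_per_day * days_per_month
    return gross_ratio, net_per_call, net_per_month


ratio, net_call, net_month = summarization_economics(0.001, 0.029, 500)
print(f"gross ratio: {ratio:.1f}x")
print(f"net saving per call: ${net_call:.3f}")
print(f"net saving per month: ${net_month:.2f}")
```

Under those assumptions the two per-call figures give a ratio of 29 and roughly $420 per month, which differs from the README's 21x and $41. The inputs behind the README's own totals are not quoted here, so the sketch makes no attempt to reconcile them.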

The preprocessing pipeline filters noise, rewrites commands, summarizes output using the cheap model, and converts results to structured JSON before the frontier model sees them. The README includes benchmarks on a TypeScript monorepo: 80 percent token reduction on grep output, 98 percent on repeated test runs using a diff-only mode, and 73 percent aggregate across 10 real-world commands. These are project-reported figures and haven't been independently verified.
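The filtering, diff-only, and JSON steps can be sketched roughly as follows. This is not open-terminal's implementation: every function name here is hypothetical, the noise filter only strips ANSI color codes and blank lines, and the cheap-model summarization call is left out entirely.

```python
import difflib
import json
import re
from typing import List, Optional

# Matches common ANSI color/style escape sequences such as "\x1b[32m".
ANSI_ESCAPE = re.compile(r"\x1b\[[0-9;]*[A-Za-z]")


def filter_noise(output: str) -> List[str]:
    """Strip ANSI escape codes and trailing whitespace, then drop blank lines."""
    lines = (ANSI_ESCAPE.sub("", line).rstrip() for line in output.splitlines())
    return [line for line in lines if line]


def diff_only(previous: List[str], current: List[str]) -> List[str]:
    """Keep only the lines removed ("- ") or added ("+ ") since the previous run."""
    matcher = difflib.SequenceMatcher(a=previous, b=current, autojunk=False)
    changes: List[str] = []
    for tag, i1, i2, j1, j2 in matcher.get_opcodes():
        if tag in ("replace", "delete"):
            changes += [f"- {line}" for line in previous[i1:i2]]
        if tag in ("replace", "insert"):
            changes += [f"+ {line}" for line in current[j1:j2]]
    return changes


def preprocess(command: str, output: str, previous_output: Optional[str] = None) -> str:
    """Return a JSON payload for the frontier model (hypothetical schema)."""
    lines = filter_noise(output)
    if previous_output is not None:
        body, mode = diff_only(filter_noise(previous_output), lines), "diff"
    else:
        body, mode = lines, "full"
    return json.dumps({"command": command, "mode": mode, "lines": body})


first_run = "\x1b[32mPASS\x1b[0m a.test.ts\n\nPASS b.test.ts\nFAIL c.test.ts\n"
second_run = "PASS a.test.ts\nPASS b.test.ts\nPASS c.test.ts\n"
print(preprocess("npm test", first_run))
print(preprocess("npm test", second_run, previous_output=first_run))
```

On a repeated test run, the second payload carries only the lines that changed, which is the kind of saving the diff-only benchmark describes. How much it saves in practice depends on how much of the output repeats between runs.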

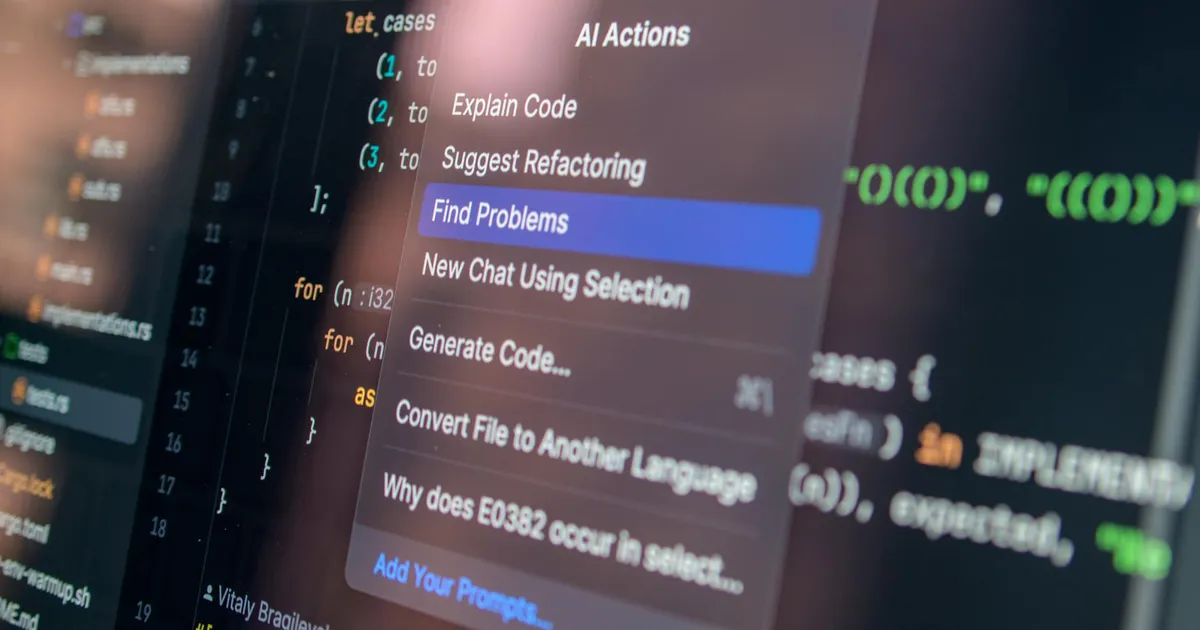

Beyond the cost mechanics, open-terminal exposes more than 20 Model Context Protocol (MCP) tools: command execution, semantic code search via AST parsing, symbol-level file reading, git state summaries, and a token economy dashboard with ROI tracking. It installs as an MCP server for Claude Code, OpenAI Codex, and Gemini CLI. The tool also accepts natural language instructions from human users and translates them into shell commands, which extends its audience beyond agent infrastructure teams.
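As an illustration of why symbol-level reading saves tokens, the sketch below returns only one named function or class from a source file instead of the whole file. It uses Python's standard `ast` module on Python source purely for demonstration; `read_symbol` is a hypothetical name, and nothing here reflects how open-terminal parses code or which languages it supports.

```python
import ast
from typing import Optional


def read_symbol(source: str, name: str) -> Optional[str]:
    """Return the source text of the first function or class named `name`, or None."""
    tree = ast.parse(source)
    for node in ast.walk(tree):
        is_definition = isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef, ast.ClassDef))
        if is_definition and node.name == name:
            # get_source_segment slices `source` using the node's line/column span.
            return ast.get_source_segment(source, node)
    return None


SAMPLE = '''import os

def helper(x):
    return x + 1

def main():
    print(helper(41))
'''

print(read_symbol(SAMPLE, "helper"))
```

An agent that only needs `helper` receives two lines rather than the full file, and the gap widens as files grow.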

One commenter in the Hacker News thread, identified as Orchestrion, said they're independently building a similar protocol for agent token efficiency. That's one data point, not a trend, but it suggests others are working on the same problem. Whether cheap-inference preprocessing becomes a standard layer in agent toolchains depends on whether the benchmarks hold up outside the README's test conditions.