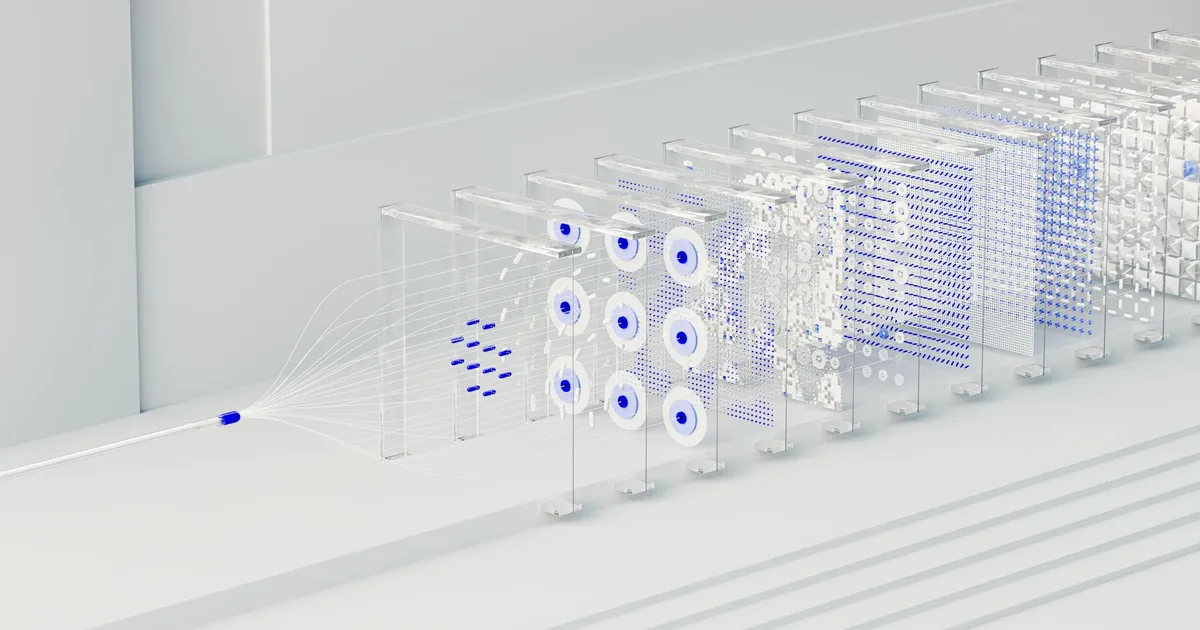

Mistral AI has released Mistral Small 4, a 119-billion-parameter Mixture-of-Experts (MoE) model under the Apache 2.0 license that consolidates three previously separate specialized models into a single deployment. The model combines Magistral's advanced reasoning, Pixtral's multimodal vision capabilities, and Devstral's agentic coding functionality, eliminating the need for developers to route workloads across different models. Its MoE architecture activates only 4 of 128 experts for each token, which works out to about 6B active parameters, while supporting a 256k context window.
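To make the "4 of 128 experts" figure concrete, here is a minimal sketch of generic top-k expert routing in plain Python. It is not Mistral's router: the random logits, the `route_token` helper, and the softmax-over-selected-experts weighting are illustrative assumptions. Only the expert counts and the 6B/119B parameter figures come from the article.

```python
import math
import random

NUM_EXPERTS = 128  # from the article
TOP_K = 4          # from the article


def route_token(router_logits, top_k=TOP_K):
    """Pick the top_k highest-scoring experts and return (index, weight) pairs.

    Generic top-k gating for illustration only; the real router may differ.
    """
    top = sorted(range(len(router_logits)),
                 key=lambda i: router_logits[i],
                 reverse=True)[:top_k]
    # Softmax over the selected logits only, so the weights sum to 1.
    peak = max(router_logits[i] for i in top)
    exps = [math.exp(router_logits[i] - peak) for i in top]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(top, exps)]


# Stand-in router scores for one token (random, purely illustrative).
random.seed(0)
logits = [random.gauss(0.0, 1.0) for _ in range(NUM_EXPERTS)]

chosen = route_token(logits)
for expert, weight in chosen:
    print(f"expert {expert:3d} weight {weight:.3f}")

print(f"experts used per token: {len(chosen) / NUM_EXPERTS:.1%}")
print(f"active parameter share: {6 / 119:.1%}")
```

The remaining 124 experts are skipped for that token, which is why per-token compute tracks the active parameter count rather than the full 119B.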

The model ships with a configurable reasoning_effort parameter, letting users dial between fast low-latency responses and deep step-by-step reasoning without switching models. Mistral reports a 40% reduction in end-to-end latency and 3x higher throughput compared with Mistral Small 3. On LiveCodeBench, Mistral Small 4 generates 3.5 to 4 times fewer output characters than comparable Qwen models, a meaningful cost difference at inference scale, where output length drives both latency and spend.
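As a rough illustration of how a per-request setting like this might be used, the sketch below builds two chat-completions style request bodies that differ only in reasoning_effort. Nothing is sent over the network. The model identifier, the accepted values ("none" and "high"), and the placement of the field at the top level of the body are all assumptions to be checked against the model card and your serving stack's documentation.

```python
import json

# Assumed accepted values; confirm against the official model card.
ALLOWED_EFFORTS = {"none", "high"}


def build_chat_request(prompt, reasoning_effort):
    """Build a chat-completions style request body (nothing is sent)."""
    if reasoning_effort not in ALLOWED_EFFORTS:
        raise ValueError(f"unsupported reasoning_effort: {reasoning_effort!r}")
    return {
        "model": "mistral-small-4",  # hypothetical identifier
        "messages": [{"role": "user", "content": prompt}],
        "reasoning_effort": reasoning_effort,  # assumed top-level field
    }


# Same model, two latency/depth trade-offs.
fast = build_chat_request("Summarize this changelog in one line.", "none")
deep = build_chat_request("Find the race condition in this scheduler.", "high")

print(json.dumps(fast, indent=2))
print(json.dumps(deep, indent=2))
```

The point of the design is that routing logic collapses to one field: the application picks an effort level per request instead of maintaining separate endpoints for a fast model and a reasoning model.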

The launch also coincides with Mistral joining the NVIDIA Nemotron Coalition as a founding member. The eight-member alliance also includes Black Forest Labs, Cursor, LangChain, Perplexity, Reflection AI, Sarvam, and Thinking Machines Lab. The coalition's stated goal is to co-develop open frontier models trained on NVIDIA DGX Cloud compute, with Mistral and NVIDIA jointly building the base model underpinning the NVIDIA Nemotron 4 family. NVIDIA CEO Jensen Huang described open models as "the lifeblood of innovation," while Mistral CEO Arthur Mensch framed the partnership as an infrastructure play: "Open frontier models are how AI becomes a true platform."

For teams building agent pipelines, Mistral Small 4 offers a single self-hostable model that handles chat, vision, and multi-step coding agents without model-switching overhead. Day-zero deployment is supported via vLLM, SGLang, llama.cpp, Hugging Face Transformers, and NVIDIA NIM. The minimum hardware requirement, 4x NVIDIA HGX H100 or equivalent, keeps on-premises use firmly in enterprise territory. Teams not running their own inference can reach the model through Mistral's Le Chat platform and API.