Stavros Korokithakis, a freelance Python developer, published a detailed account in March 2026 of his production multi-agent LLM workflow, offering a concrete look at how an experienced engineer builds software with AI today. His pipeline, orchestrated through OpenCode, assigns distinct roles to models from competing AI companies: Claude Opus 4.6 serves as an architect agent for planning and system design, Claude Sonnet 4.6 handles implementation, and reviewer agents from OpenAI (Codex) and Google (Gemini), alongside Anthropic's Opus, provide critique. The cross-company composition is a core architectural decision. Korokithakis argues that single-model review loops suffer from self-agreement bias: a model rarely critiques its own output, or that of a closely related sibling, with any rigor, which makes provider diversity a functional requirement rather than a preference.
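
The role assignment described above can be sketched as plain data, which makes the provider-diversity requirement checkable. This is a minimal illustration only, not Korokithakis's configuration and not OpenCode's agent format: the `Agent` class, the `independent_reviewers` helper, and the model identifier strings are all hypothetical names chosen for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Agent:
    role: str      # "architect", "implementer", or "reviewer"
    provider: str  # company serving the model
    model: str     # illustrative label, not a real API model ID


# Hypothetical encoding of the roles named in the article.
PIPELINE = [
    Agent("architect", "anthropic", "claude-opus-4.6"),
    Agent("implementer", "anthropic", "claude-sonnet-4.6"),
    Agent("reviewer", "openai", "codex"),
    Agent("reviewer", "google", "gemini"),
    Agent("reviewer", "anthropic", "claude-opus-4.6"),
]


def independent_reviewers(pipeline):
    """Return reviewers whose provider differs from every implementer's."""
    implementer_providers = {
        agent.provider for agent in pipeline if agent.role == "implementer"
    }
    return [
        agent
        for agent in pipeline
        if agent.role == "reviewer"
        and agent.provider not in implementer_providers
    ]


def has_provider_diversity(pipeline):
    """True if at least one reviewer comes from a different provider."""
    return len(independent_reviewers(pipeline)) >= 1
```

Under these assumptions, the Codex and Gemini reviewers count as independent of the Sonnet implementer, while the Opus reviewer does not. A pipeline whose reviewers all share the implementer's provider would fail the check, which is the situation the self-agreement argument warns against.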

The workflow has produced real, daily-use software rather than demos. Korokithakis describes Stavrobot, a security-focused personal AI assistant that manages his calendar, conducts research, handles chores autonomously, and even extends itself by writing new code. He has also built Middle, a voice note pendant that transcribes recordings and routes them to his LLM assistant, and Pine Town, an infinite multiplayer canvas. He reports sustaining codebases of tens of thousands of source lines over weeks with consistent reliability, a direct counter to the common critique that LLM-generated code becomes unmaintainable at scale. He qualifies the claim: projects in technologies he already understands well stay stable, while projects in unfamiliar domains such as mobile development still degrade into poor architectural choices. LLMs, in his account, sharpen what a developer already knows rather than substitute for knowledge they lack.

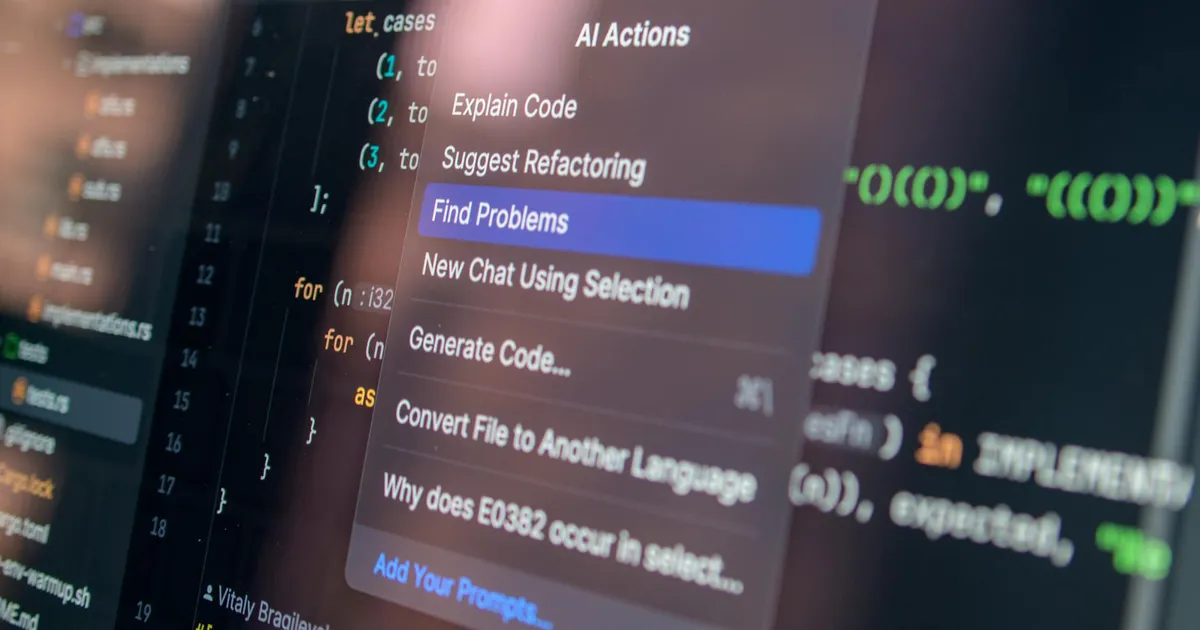

The workflow's dependence on multi-provider model access is what draws Korokithakis to OpenCode specifically. First-party tools like Claude Code, Codex CLI, and Gemini CLI are commercially incentivized to route inference to their parent company's models, and their orchestration features are optimized for single-provider workflows. OpenCode, built by Anomaly (the team behind SST and models.dev), is explicitly provider-neutral, supports more than 75 LLM providers, and enables custom agent definitions that can autonomously call one another. Since its April 2025 launch it has reached 122,000 GitHub stars and roughly five million monthly developers. Those numbers suggest that OpenCode has found a real audience as a neutral coordination layer for multi-model pipelines, a role Anomaly appears to be cultivating deliberately as infrastructure rather than as a consumer AI product.

Korokithakis closes with a thesis on the shifting nature of engineering skill. The ability to write correct code, he argues, is becoming less valuable, while the ability to architect systems correctly, make sound technology choices, and understand how components interact is becoming dramatically more important. He anticipates that even architectural oversight may become unnecessary within roughly a year, but stresses that the current state still requires a skilled human in the loop. For practitioners tracking the AI agent space, his post is notable less for its optimism, which is common, than for its specificity: concrete tooling choices, named model versions, real projects, and an honest accounting of where the workflow still fails.