A peer-reviewed study from Carnegie Mellon University, accepted at the 23rd International Conference on Mining Software Repositories (MSR '26), provides the first large-scale causal evidence of how Cursor AI affects real-world open-source software development. Authored by Hao He, Courtney Miller, Shyam Agarwal, Christian Kästner, and Bogdan Vasilescu, the research used a difference-in-differences design to compare Cursor-adopting GitHub projects against a matched control group of similar non-adopting projects. The headline finding: Cursor adoption produces a statistically significant but transient boost in development velocity, along with a substantial and persistent increase in static analysis warnings and code complexity. Critically, panel generalized-method-of-moments estimation indicates that these quality degradations are themselves major drivers of long-term velocity slowdown, meaning the initial speed gains can eventually turn into a productivity bottleneck.

The study's strength is its causal design, which goes beyond simple correlation. Researchers measured code complexity, static analysis warnings collected via a local SonarQube server, duplicate line density, and technical debt across the studied projects. The causal graph they propose links LLM agent use positively to short-term velocity, but negatively to velocity over time, through the warnings and complexity that accumulate along the way. The paper explicitly calls for quality assurance to be a "first-class citizen" in the design of agentic AI coding tools and AI-driven workflows, a conclusion that plausibly extends to GitHub Copilot and Windsurf as well as Cursor, although the study itself measured only Cursor adoption.
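To make the difference-in-differences idea concrete, here is a minimal sketch of the textbook two-group, two-period version. It is a simplification for illustration, not the estimator used in the paper; the commit counts, the `adopter`/`control` labels, and the helper names are all hypothetical.

```python
from statistics import mean

# Hypothetical monthly commit counts. These are NOT data from the study.
observations = [
    # (group, period, commits)
    ("adopter", "pre", 40), ("adopter", "pre", 44),
    ("adopter", "post", 60), ("adopter", "post", 64),
    ("control", "pre", 38), ("control", "pre", 42),
    ("control", "post", 43), ("control", "post", 47),
]


def group_mean(rows, group, period):
    """Average outcome for one group in one period."""
    return mean(v for g, p, v in rows if g == group and p == period)


def did_estimate(rows):
    """Change among adopters minus change among controls.

    Subtracting the control group's change removes trends shared by both
    groups, under the assumption that the groups would otherwise have
    moved in parallel.
    """
    treated_change = group_mean(rows, "adopter", "post") - group_mean(rows, "adopter", "pre")
    control_change = group_mean(rows, "control", "post") - group_mean(rows, "control", "pre")
    return treated_change - control_change


effect = did_estimate(observations)
print(effect)
```

In this toy data the adopters' average rises by 20 commits and the controls' by 5, so the estimate attributes the remaining 15 to adoption. The matched control group in the study plays the role of the `control` rows here.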

Hacker News commentary on the study flagged a key limitation: none of the projects in the study had SonarQube integrated into their Cursor agent pipelines, so the agents generating warnings received no feedback about the problems they were creating. These commenters argue that this is a tooling integration gap, not a fundamental limitation of LLM agents: a closed feedback loop between static analysis and the agent itself would likely correct many of the flagged issues automatically. Commenters also noted that increased code complexity may carry a lower cost than it once did, since modern LLM agents can explain, test, and refactor complex code far more cheaply than developers working alone.
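The closed feedback loop the commenters describe could look roughly like the sketch below. Everything in it is an assumption for illustration: `find_warnings` is a toy check built on Python's `ast` module standing in for a real analyzer such as SonarQube, `fake_agent` is a hard-coded stand-in for an LLM agent, and `refine` is a hypothetical helper, not part of Cursor or any other product.

```python
import ast
from typing import Callable, List, Tuple


def find_warnings(source: str) -> List[str]:
    """Toy static check (stand-in for a real analyzer): flag bare `except:`."""
    warnings = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.ExceptHandler) and node.type is None:
            warnings.append(f"line {node.lineno}: bare except")
    return warnings


def refine(
    generate: Callable[[str, List[str]], str],
    task: str,
    max_rounds: int = 3,
) -> Tuple[str, List[str]]:
    """Regenerate code with the analyzer's warnings until it is clean.

    Stops after `max_rounds` attempts and returns whatever warnings remain.
    """
    warnings: List[str] = []
    source = ""
    for _ in range(max_rounds):
        source = generate(task, warnings)
        warnings = find_warnings(source)
        if not warnings:
            break
    return source, warnings


def fake_agent(task: str, warnings: List[str]) -> str:
    """Hypothetical agent: narrows the except clause once it sees a warning."""
    if warnings:
        return "try:\n    x = int('1')\nexcept ValueError:\n    x = 0\n"
    return "try:\n    x = int('1')\nexcept:\n    x = 0\n"


final_source, remaining = refine(fake_agent, "parse an integer")
print(remaining)
```

The design point is that the analyzer's output becomes an input to the next generation round, which is the feedback the studied projects did not provide. Whether a real agent would fix warnings as reliably as this stub is exactly what the commenters' argument leaves untested.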

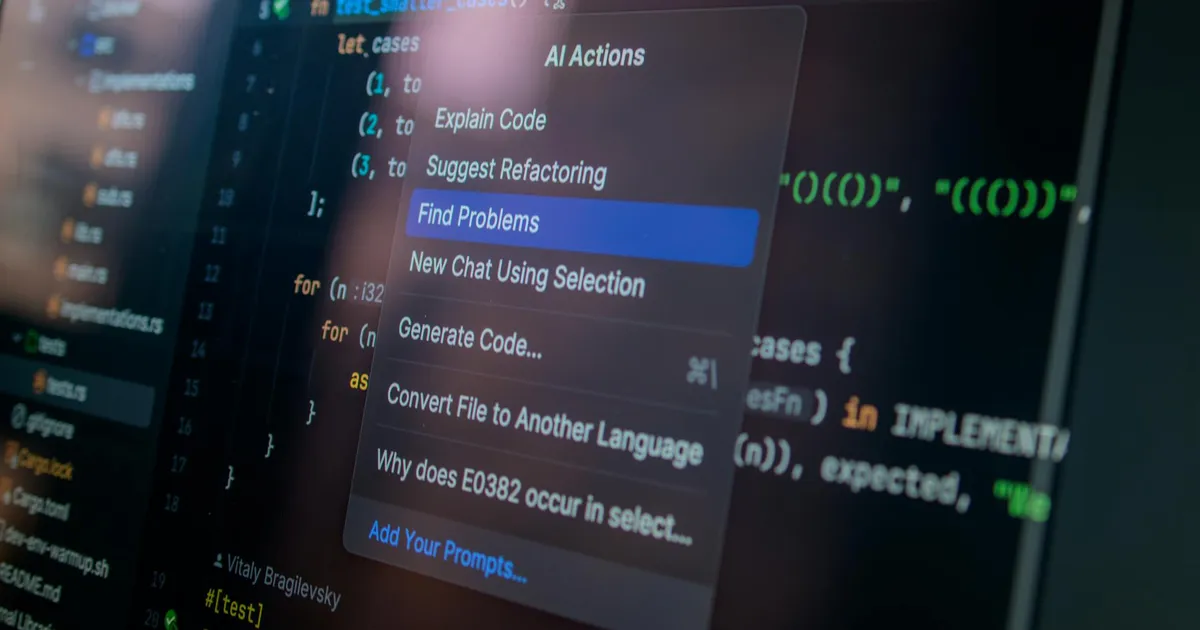

Anysphere's own product trajectory maps closely onto the study's conclusions. Cursor's 1.0 release in June 2025 shipped BugBot, an automated PR-review agent that integrates with GitHub and flagged 1.5 million potential issues across more than one million pull requests during its beta period. Anysphere subsequently acquired code-review platform Graphite and is merging it with BugBot to build a more comprehensive quality layer around AI-generated code. CEO Michael Truell warned in December 2025 that "vibe coding" (blindly trusting AI output) builds "shaky foundations" that eventually "crumble," a framing that echoes the CMU findings. Whether or not Truell was responding directly to the research, Anysphere's strategic direction suggests the company is acutely aware that accelerating code output without policing its quality is the central challenge its product now faces.