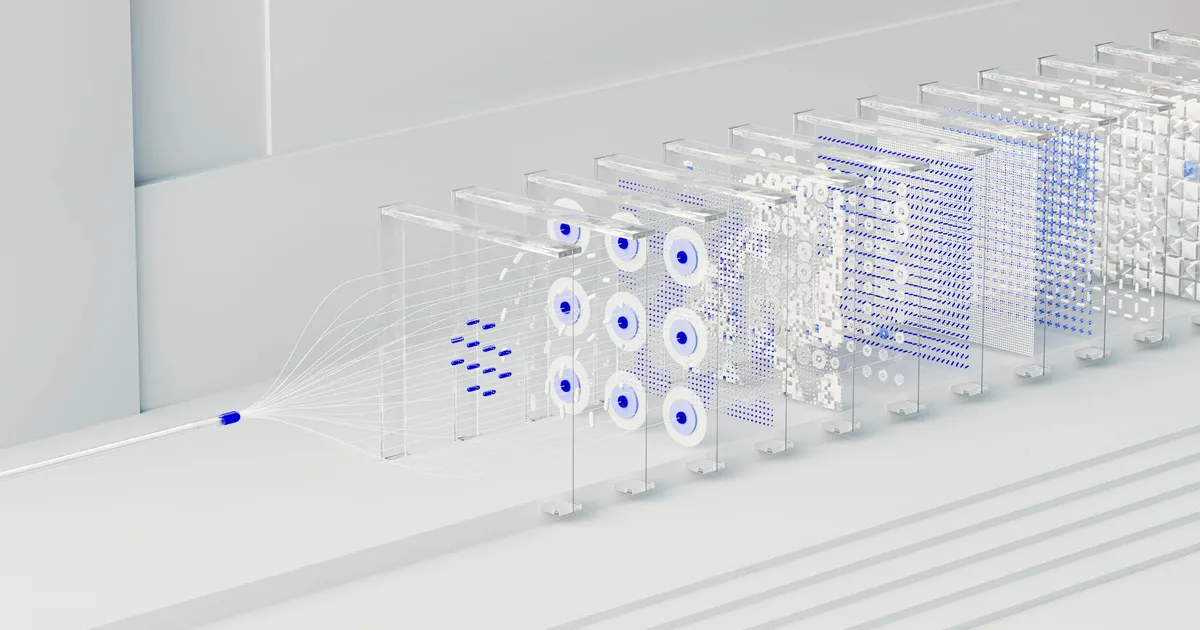

AutoResearchClaw, released by the aiming-lab organization on GitHub, automates the full academic paper production cycle from a single natural-language prompt. Built on GPT-4o with gpt-4o-mini as a fallback, the system runs a 23-stage, 8-phase pipeline: literature discovery via arXiv and Semantic Scholar, hypothesis generation, sandbox experimentation with hardware auto-detection, statistical analysis, chart generation, multi-agent peer review, and LaTeX compilation targeting NeurIPS, ICML, and ICLR templates. A single CLI command with the `--auto-approve` flag takes a topic to a compiled paper with no human in the loop.

The architecture does more than generate text. A 4-layer citation verification system cross-checks all references against arXiv, CrossRef, DataCite, and an LLM judge, directly targeting the hallucinated citations that undermine much AI-generated research content. A PIVOT/REFINE decision loop at Stage 15 lets the system change research direction based on experimental outcomes rather than executing a fixed linear plan. Per-run lessons are extracted and stored with 30-day time-decay weighting, so that older lessons count for less and the pipeline is meant to improve across runs. A Sentinel watchdog monitors quality throughout, flagging NaN/Inf anomalies, paper-evidence inconsistencies, and citation relevance issues.
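The project's materials do not spell out how the 30-day time-decay weighting is computed, so the following is only a minimal sketch of one plausible reading: exponential decay with a 30-day half-life, under which a lesson loses half its influence every 30 days. The names `decay_weight` and `rank_lessons`, and the half-life interpretation itself, are assumptions made for illustration and are not taken from the AutoResearchClaw codebase.

```python
from datetime import datetime, timedelta, timezone

# Assumption: "30-day time-decay" is read here as a 30-day half-life.
HALF_LIFE_DAYS = 30.0


def decay_weight(created_at, now, half_life_days=HALF_LIFE_DAYS):
    """Return a weight in (0, 1] that halves every `half_life_days`."""
    age_days = (now - created_at).total_seconds() / 86400.0
    age_days = max(age_days, 0.0)  # treat future timestamps as brand new
    return 0.5 ** (age_days / half_life_days)


def rank_lessons(lessons, now):
    """Sort hypothetical (text, created_at) lessons by decayed weight, newest-weighted first."""
    weighted = [(decay_weight(created_at, now), text) for text, created_at in lessons]
    return sorted(weighted, key=lambda pair: pair[0], reverse=True)


if __name__ == "__main__":
    now = datetime(2025, 3, 1, tzinfo=timezone.utc)
    lessons = [
        ("Normalize inputs before training", now - timedelta(days=60)),
        ("Pin random seeds in the sandbox", now - timedelta(days=5)),
        ("Check for NaN losses early", now - timedelta(days=30)),
    ]
    for weight, text in rank_lessons(lessons, now):
        print(f"{weight:.3f}  {text}")
```

Under this reading a lesson never drops to zero weight; it simply fades, so a strong old lesson can still be outranked by a fresh one. A hard 30-day cutoff or a linear ramp would be equally consistent with the description.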

The project also integrates with OpenClaw, an open-source personal AI assistant framework that the project README describes as sponsored by OpenAI and Vercel — a claim Agent Wars has not independently verified. OpenClaw operates across WhatsApp, Telegram, Slack, and Discord; through the integration, a user can reportedly trigger a full paper generation run by sending a single chat message. An optional bridge adapter adds scheduled runs via cron, cross-session memory persistence, parallel sub-session spawning, and live web fetch during literature review. Whether these integrations perform as documented has not been independently tested.

The project reports 1,039 passing tests and ships documentation in nine languages. The detail that matters most is simpler: AutoResearchClaw targets NeurIPS, ICML, and ICLR by name, not generic publishing. Those venues run double-blind review and treat originality as a core criterion. A system capable of producing a plausible submission, with real citations, sandbox-generated charts, and review by other agents, is a direct stress test of conference infrastructure that was not built to detect machine-generated research. Academic publishers are already debating policy; tools like this make that debate urgent.