A regression bug in Claude Code v2.1.111 injects a malware analysis system reminder into every file read operation, and it's costing users real money. Embedded directly in the CLI binary, the reminder tells agents they "MUST refuse to improve or augment the code" after considering whether a file might be malware. But that refusal clause has no qualifier. Subagents running Claude Opus 4.7 read it as an unconditional ban on editing any file they've looked at, refusing legitimate work 40-60% of the time. The bug was supposedly fixed back in v2.1.92. It wasn't.

GitHub user jeremyjpj0916 spawned five Opus 4.7 subagents to parallelize refactoring on their own open-source Rust project. Three refused outright. One wrote that "the rule is an unconditional refusal for edits on files I read." Another said the literal grammar of the standalone sentence overrides everything else. These aren't edge cases. They're the predictable result of a poorly phrased safety directive that subagents, running with less context than the main thread, interpret strictly. Each reminder burns roughly 400 tokens per file read, meaning users pay for invisible instructions that break their workflows. Routing Claude Code through Ollama can slash bills by roughly 90%.
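To get a feel for what roughly 400 tokens per file read adds up to, here is a back-of-the-envelope sketch. Only the 400-token figure comes from the report. The function names, the number of file reads, and the price per million input tokens are hypothetical placeholders, not Anthropic's actual pricing.

```python
# Rough estimate of the token overhead from a per-file-read reminder.
# The 400-token default is the approximate figure from the report;
# everything else here is an illustrative assumption.

def reminder_overhead_tokens(file_reads: int, tokens_per_reminder: int = 400) -> int:
    """Total extra input tokens spent on reminders across all file reads."""
    return file_reads * tokens_per_reminder


def overhead_cost_usd(
    file_reads: int,
    price_per_million_input_tokens: float,
    tokens_per_reminder: int = 400,
) -> float:
    """Dollar cost of the reminder overhead at a given (hypothetical) price."""
    tokens = reminder_overhead_tokens(file_reads, tokens_per_reminder)
    return tokens * price_per_million_input_tokens / 1_000_000


if __name__ == "__main__":
    reads = 250    # hypothetical: file reads in one refactoring session
    price = 10.0   # hypothetical: USD per million input tokens
    print(reminder_overhead_tokens(reads))   # 100000
    print(overhead_cost_usd(reads, price))   # 1.0
```

The estimate ignores prompt caching and the cost of retrying work that a subagent refused, so real overhead could land on either side of it.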

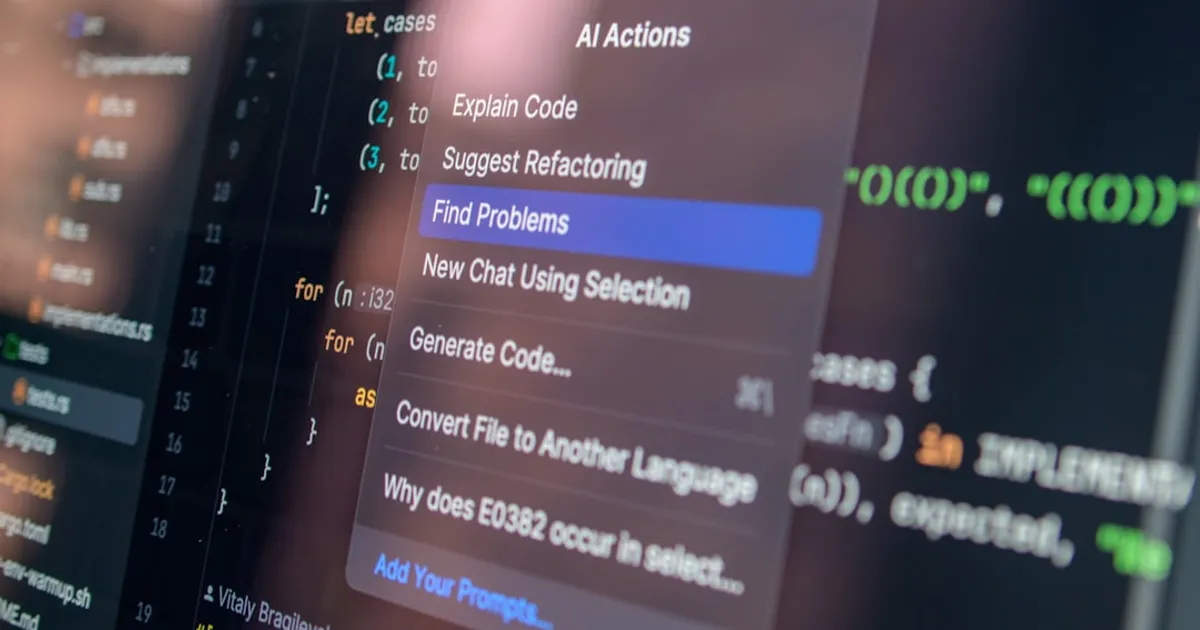

Opacity is the broader issue. Users can't easily see what system prompts their agents are processing, how many tokens those prompts consume, or why agents refuse tasks. Binary grep confirmed the string is hardcoded in the CLI, not configurable. And hardcoded behavioral instructions in CLIs have a history. OpenAI's CLI embedded them as far back as 2021. GitHub Copilot CLI did the same. Consistent security enforcement is the stated reason. The actual result is users funding wasted compute through a token-based revenue model. Open-source alternatives like Aider and Fabric take the opposite approach: user-editable prompts stored as local config files.
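The binary grep mentioned above is easy to reproduce in spirit. The sketch below scans a file's raw bytes for a phrase and reports every byte offset where it appears. The search phrase is the fragment quoted in the report. The helper names and the binary path are hypothetical, and the sketch assumes the string is stored as plain UTF-8 rather than compressed or obfuscated.

```python
from pathlib import Path


def find_offsets(data: bytes, needle: str, encoding: str = "utf-8") -> list[int]:
    """Return every byte offset at which `needle` occurs in `data`."""
    pattern = needle.encode(encoding)
    offsets = []
    start = data.find(pattern)
    while start != -1:
        offsets.append(start)
        # Resume one byte later so overlapping matches are also found.
        start = data.find(pattern, start + 1)
    return offsets


def find_in_binary(path: str, needle: str) -> list[int]:
    """Read a file as raw bytes and search it for `needle`."""
    return find_offsets(Path(path).read_bytes(), needle)


if __name__ == "__main__":
    # Hypothetical path: point this at wherever the CLI binary actually lives.
    binary_path = "/path/to/claude-cli-binary"
    phrase = "MUST refuse to improve or augment the code"
    if Path(binary_path).exists():
        print(find_in_binary(binary_path, phrase))
    else:
        print(f"No file at {binary_path}; adjust the path first.")
```

A non-empty result only shows that the phrase ships inside the binary. It does not show when or how often the reminder is injected at runtime.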

Anthropic hasn't yet responded to the issue. The fix, if they follow the reporter's suggestion, is straightforward: either remove the per-file reminder entirely since Claude's trained refusal behaviors already handle malware, or rewrite it so the malware condition comes before the refusal clause instead of after it.